How to Set Up Snowflake Cortex Analyst

Last updated Apr 29, 2026

What Snowflake Cortex Analyst Does

Snowflake Cortex Analyst is a managed service built into the Snowflake Data Cloud that translates plain-English questions into SQL queries and returns direct answers. A business user can type "What was total revenue by region last quarter?" into a chat interface and receive an accurate result without touching a SQL editor. The feature became generally available in 2025 and by 2026 has been deployed across thousands of Snowflake accounts as a primary self-service analytics layer.

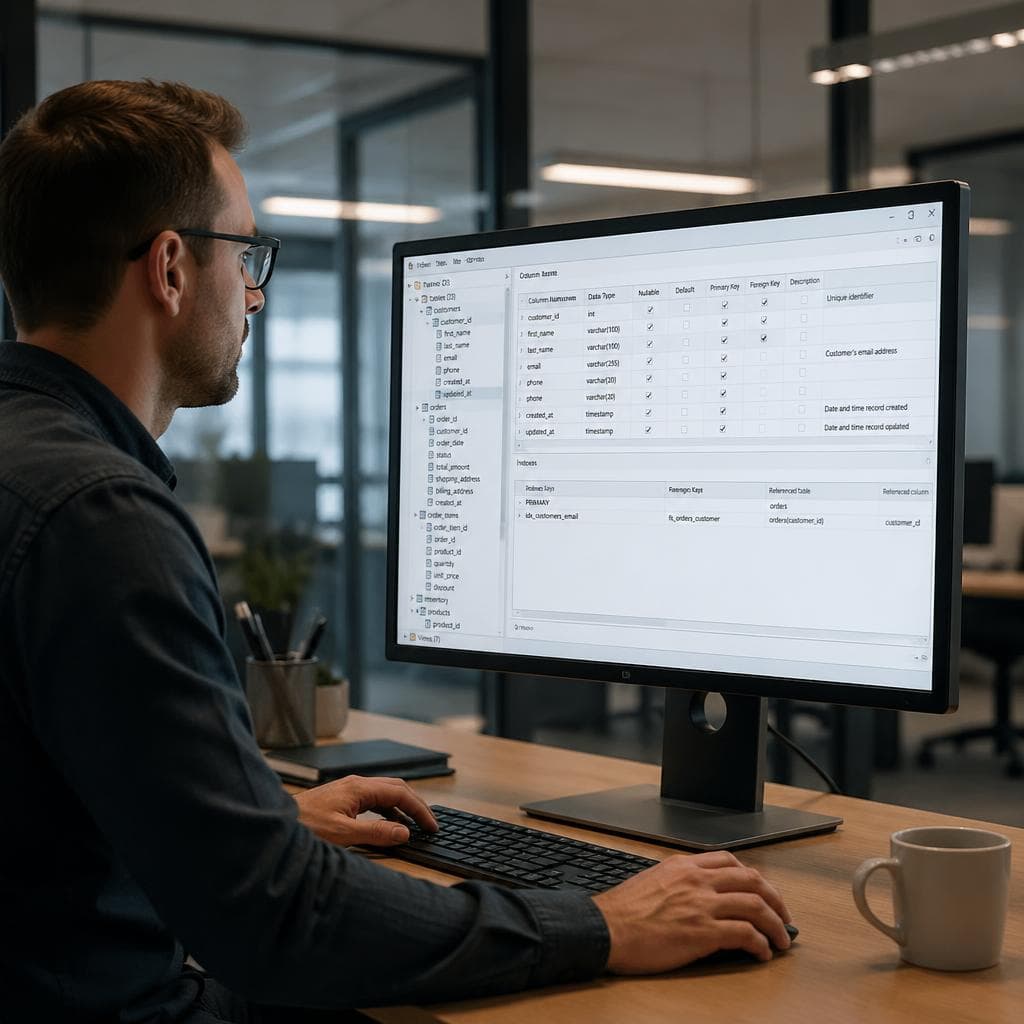

The setup involves two main components: a semantic model YAML file that encodes your table structure and business terminology, and a chat interface built with Snowflake-native Streamlit. A standard deployment for one or two tables takes two to three hours. Most of that time goes into writing clear column descriptions in the YAML file, because the quality of those descriptions directly determines how accurately the model answers questions.

Prerequisites

Before starting, confirm you have the following in place.

A Snowflake account with SYSADMIN or ACCOUNTADMIN privileges is required. Standard analyst roles do not have the permissions needed to create stages or grant Cortex access during initial setup.

At least one table with structured data already loaded into Snowflake is needed. Cortex Analyst generates SQL against relational tables with named columns. It does not read from semi-structured JSON blobs or external files directly.

Python 3.8 or later is useful if you want to test the setup via the REST API from your local machine. If you plan to build entirely within Snowflake-native Streamlit, no local Python environment is required.

No data engineering background is needed to complete this guide. You need to understand your data well enough to describe its columns in plain English, which is the same level of knowledge any working analyst already has.

Step 1: Create the Storage Stage

Cortex Analyst reads your semantic model from an internal Snowflake stage. Open a Snowflake SQL worksheet and run these commands:

CREATE DATABASE IF NOT EXISTS cortex_demo;
CREATE SCHEMA IF NOT EXISTS cortex_demo.analytics;
CREATE STAGE IF NOT EXISTS cortex_demo.analytics.cortex_stage;

CREATE WAREHOUSE IF NOT EXISTS cortex_wh
  WAREHOUSE_SIZE = 'X-SMALL'
  AUTO_SUSPEND = 60
  AUTO_RESUME = TRUE;

An X-Small warehouse is sufficient for testing and single-user workloads. Resize to Small or Medium when multiple users will be querying concurrently.
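The commands above create the objects, but any role that will query Cortex Analyst also needs access to them. The following is a minimal sketch of a helper that builds the GRANT statements for one role. The role name `analyst_role` and the function name `build_grants` are hypothetical, the object names are the ones created in this step, and the use of the `SNOWFLAKE.CORTEX_USER` database role is an assumption to verify against your account's configuration before running the output.

```python
def build_grants(role, database="cortex_demo", schema="analytics",
                 stage="cortex_stage", table="orders", warehouse="cortex_wh"):
    """Return GRANT statements that let `role` use the demo objects.

    `role` is a hypothetical role name; replace the defaults with your own objects.
    """
    fq_schema = f"{database}.{schema}"
    return [
        # Assumption: CORTEX_USER is the database role that gates Cortex features.
        f"GRANT DATABASE ROLE SNOWFLAKE.CORTEX_USER TO ROLE {role};",
        f"GRANT USAGE ON DATABASE {database} TO ROLE {role};",
        f"GRANT USAGE ON SCHEMA {fq_schema} TO ROLE {role};",
        # READ on the stage lets the role load the semantic model file.
        f"GRANT READ ON STAGE {fq_schema}.{stage} TO ROLE {role};",
        f"GRANT SELECT ON TABLE {fq_schema}.{table} TO ROLE {role};",
        f"GRANT USAGE ON WAREHOUSE {warehouse} TO ROLE {role};",
    ]


# Print the statements so they can be reviewed and pasted into a worksheet.
for statement in build_grants("analyst_role"):
    print(statement)
```

Generating the statements as text, rather than executing them, keeps the review step explicit: an administrator can read exactly what will be granted before running anything.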

Step 2: Write the Semantic Model YAML

The semantic model is the most important configuration file in this setup. It tells Cortex Analyst what your tables contain, what each column means in business language, what values categorical columns hold, and which aggregations are valid. Poor descriptions here are the primary cause of inaccurate answers.

Here is a minimal example for a sales orders table:

name: sales_model
tables:
  - name: orders
    base_table:
      database: cortex_demo
      schema: analytics
      table: orders
    description: "Contains one row per customer order placed through the platform."
    # Columns are declared by role (time_dimensions, facts, dimensions),
    # and each entry needs an expr that maps it to the physical column.
    time_dimensions:
      - name: order_date
        expr: order_date
        description: "The date the order was placed by the customer."
        data_type: date
    facts:
      - name: revenue
        expr: revenue
        description: "Total order value in USD including taxes, before any refunds."
        data_type: number
    dimensions:
      - name: region
        expr: region
        description: "Geographic sales region for the order."
        data_type: text
        sample_values:
          - "North America"
          - "Europe"
          - "APAC"
          - "LATAM"
    metrics:
      - name: total_revenue
        description: "Sum of revenue across all orders."
        expr: SUM(revenue)
        data_type: number

Three practices consistently improve accuracy: write every column description as a complete sentence; include sample_values for all categorical columns so the model knows what values to filter on; and state the grain of the table explicitly in the table-level description field (one row per order, one row per session, one row per user).
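The three practices above can be checked mechanically before each upload. The following is a rough sketch, not part of any Snowflake tooling: `lint_semantic_model` is a hypothetical helper that takes the semantic model already parsed into a Python dict (for example with a YAML library) and returns warnings. The "complete sentence" and "grain" checks are simple heuristics chosen for illustration.

```python
# Keys under which a table may list its columns; the helper accepts any of them.
COLUMN_KEYS = ("columns", "dimensions", "time_dimensions", "facts", "measures")
GRAIN_HINT = "one row per"
TEXT_TYPES = ("text", "varchar", "string")


def lint_semantic_model(model):
    """Return a list of warnings for a semantic model parsed into a dict."""
    warnings = []
    for table in model.get("tables", []):
        tname = table.get("name", "<unnamed>")
        # Practice 3: the table description should state the grain.
        if GRAIN_HINT not in table.get("description", "").lower():
            warnings.append(f"{tname}: table description does not state the grain")
        for key in COLUMN_KEYS:
            for col in table.get(key) or []:
                cname = f"{tname}.{col.get('name', '<unnamed>')}"
                desc = col.get("description", "").strip()
                # Practice 1 (heuristic): several words and a final period.
                if len(desc.split()) < 4 or not desc.endswith("."):
                    warnings.append(f"{cname}: description is not a complete sentence")
                # Practice 2: text columns should list sample_values.
                is_text = str(col.get("data_type", "")).lower() in TEXT_TYPES
                if is_text and not col.get("sample_values"):
                    warnings.append(f"{cname}: categorical column has no sample_values")
    return warnings


# A deliberately weak model to show the warnings.
draft = {
    "tables": [{
        "name": "orders",
        "description": "Customer orders.",
        "dimensions": [{"name": "region", "description": "Region", "data_type": "text"}],
    }]
}
for warning in lint_semantic_model(draft):
    print(warning)
```

Running a check like this as part of the upload routine catches thin descriptions before they show up as wrong answers.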

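Save the file as sales_model.yaml and upload it to the stage. Run PUT from a client such as SnowSQL, or use the stage upload option in the Snowflake web interface. Set AUTO_COMPRESS = FALSE so the file keeps the name sales_model.yaml instead of being gzipped to sales_model.yaml.gz:

PUT file:///local/path/to/sales_model.yaml @cortex_demo.analytics.cortex_stage AUTO_COMPRESS = FALSE OVERWRITE = TRUE;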

Step 3: Test the Connection with the REST API

Before building a user interface, confirm that Cortex Analyst returns correct answers against your model. The fastest test is a direct REST API call using a Snowflake session token, which you can generate via the SnowSQL CLI or Snowflake Python connector.

import json

import requests

account = "your-account-identifier"
token = "your-session-token"

url = f"https://{account}.snowflakecomputing.com/api/v2/cortex/analyst/message"

payload = {
    "messages": [
        {"role": "user", "content": [{"type": "text", "text": "What was total revenue last month?"}]}
    ],
    "semantic_model_file": "@cortex_demo.analytics.cortex_stage/sales_model.yaml",
}

# A session token is sent with the "Snowflake Token" scheme;
# "Bearer" is used for OAuth tokens and key-pair JWTs instead.
headers = {
    "Authorization": f'Snowflake Token="{token}"',
    "Content-Type": "application/json",
}

resp = requests.post(url, headers=headers, json=payload, timeout=60)
resp.raise_for_status()  # fail loudly on HTTP errors rather than printing an error body
print(json.dumps(resp.json(), indent=2))

A successful response includes the generated SQL and a plain-language interpretation of the question. Cortex Analyst does not execute the query for you, so run the returned SQL to get the actual numbers. If the SQL is wrong or missing, review your YAML first. Column descriptions that are too short or too generic account for the majority of failed queries.
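To check a response programmatically rather than by reading raw JSON, it helps to separate the text from the SQL. The sketch below assumes the response body is a dict whose `message.content` is a list of blocks, with `text` blocks carrying a `text` field and `sql` blocks carrying a `statement` field. The function name `summarize_response` is hypothetical, and the sample response is hand-written for illustration, not captured from a real call.

```python
def summarize_response(response):
    """Split a Cortex Analyst response dict into its text and SQL parts.

    Assumes the shape {"message": {"content": [{"type": ..., ...}, ...]}}.
    """
    text_parts = []
    sql = None
    for block in response.get("message", {}).get("content", []):
        if block.get("type") == "text":
            text_parts.append(block.get("text", ""))
        elif block.get("type") == "sql":
            sql = block.get("statement")
    return {"text": "\n".join(text_parts), "sql": sql}


# Hand-written sample in the assumed shape.
sample = {
    "message": {
        "role": "analyst",
        "content": [
            {"type": "text", "text": "Interpreted as: total revenue for last month."},
            {"type": "sql", "statement": "SELECT SUM(revenue) AS total_revenue FROM orders"},
        ],
    }
}

summary = summarize_response(sample)
print(summary["text"])
print(summary["sql"])
```

A `sql` value of `None` is a useful signal in testing: it usually means the model could not map the question onto the semantic model, which points back to the YAML descriptions.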

Step 4: Build a Chat Interface with Snowflake-Native Streamlit

Snowflake-native Streamlit lets you build a shareable chat UI entirely inside Snowflake without managing external infrastructure. In the Snowflake web interface, navigate to Projects in the left menu, click Streamlit, then select the + Streamlit App button.

Paste this starter code into the editor:

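import json

import _snowflake  # built-in module, available only inside Streamlit in Snowflake
import streamlit as st
from snowflake.snowpark.context import get_active_session

session = get_active_session()
MODEL_FILE = "@cortex_demo.analytics.cortex_stage/sales_model.yaml"

st.title("Ask Your Data")

if "messages" not in st.session_state:
    st.session_state.messages = []

question = st.chat_input("Ask a question about your data")

if question:
    st.session_state.messages.append({"role": "user", "content": question})

    # Cortex Analyst is a REST endpoint, not a SQL function, so the app
    # calls the API through its own session instead of session.sql().
    body = {
        "messages": [{"role": "user", "content": [{"type": "text", "text": question}]}],
        "semantic_model_file": MODEL_FILE,
    }
    resp = _snowflake.send_snow_api_request(
        "POST", "/api/v2/cortex/analyst/message", {}, {}, body, None, 50000  # timeout in ms
    )
    answer = json.loads(resp["content"])

    # The reply is a list of blocks: "text" holds the interpretation, "sql" the statement.
    blocks = answer.get("message", {}).get("content", []) if resp["status"] < 400 else []
    texts = [b["text"] for b in blocks if b.get("type") == "text"]
    answer_text = "\n\n".join(texts) or "No answer."
    st.session_state.messages.append({"role": "assistant", "content": answer_text})

    # Run the generated SQL so the user sees the result, not just the query.
    for b in blocks:
        if b.get("type") == "sql":
            st.session_state.messages.append(
                {"role": "assistant", "content": session.sql(b["statement"]).to_pandas()}
            )

for msg in st.session_state.messages:
    with st.chat_message(msg["role"]):
        st.write(msg["content"])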

Click Run, then share the Streamlit app URL with your team. Business users can ask questions immediately without SQL knowledge or access to Snowflake's query editor.
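The starter app sends only the latest question, so follow-ups such as "and by region?" lose their context. One possible extension is to send the earlier turns as well. The sketch below converts the app's chat history into the message list used in the request payload. It assumes the API accepts prior turns in the same `messages` list and labels the assistant's turns with the role `analyst`; both are assumptions to confirm in the current API reference. The function name `to_api_messages` is hypothetical.

```python
# Assumption: the API expects "analyst" where the chat UI uses "assistant".
ROLE_MAP = {"user": "user", "assistant": "analyst"}


def to_api_messages(history):
    """Convert chat history entries into request-payload messages.

    Each history entry is a dict with "role" and "content". Only entries whose
    content is a string are forwarded; result tables and other objects are skipped.
    """
    return [
        {
            "role": ROLE_MAP[entry["role"]],
            "content": [{"type": "text", "text": entry["content"]}],
        }
        for entry in history
        if isinstance(entry["content"], str)
    ]


history = [
    {"role": "user", "content": "What was total revenue last month?"},
    {"role": "assistant", "content": "Interpreted as: total revenue for last month."},
    {"role": "user", "content": "And by region?"},
]
for message in to_api_messages(history):
    print(message["role"], "->", message["content"][0]["text"])
```

In the app, the returned list would replace the single-message `messages` value in the request body. Keeping the conversion in one function makes it easy to cap the history length later if requests grow too large.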

Step 5: Improve Answer Accuracy with Verified Queries

Cortex Analyst handles a wide range of questions on well-described tables out of the box. Adding verified queries to your semantic model pushes accuracy further by giving the model explicit examples of your specific business terminology and the most common question patterns.

A verified query pairs a natural-language question with the correct SQL. Add a verified_queries block to your YAML file:

verified_queries:
  - name: revenue_last_month
    question: "What was total revenue last month?"
    sql: |
      SELECT SUM(revenue) AS total_revenue
      FROM orders
      WHERE DATE_TRUNC('month', order_date) =
            DATE_TRUNC('month', DATEADD('month', -1, CURRENT_DATE))
  - name: revenue_by_region_ytd
    question: "What is revenue by region this year?"
    sql: |
      SELECT region, SUM(revenue) AS total_revenue
      FROM orders
      WHERE YEAR(order_date) = YEAR(CURRENT_DATE)
      GROUP BY region
      ORDER BY total_revenue DESC

According to Snowflake's Verified Query Repository documentation, adding five to ten verified queries per table measurably improves accuracy for those question types and helps the model generalize to similar questions it has not seen before.
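As the list of verified queries grows, small mistakes such as duplicate names or a non-query statement become easy to miss. The following is a minimal sketch of a pre-upload check, assuming the `verified_queries` block has already been parsed into a list of dicts. The function name `check_verified_queries` and the rules it applies are illustrative house rules, not requirements stated by Snowflake.

```python
def check_verified_queries(entries):
    """Return a list of problems found in verified query entries (dicts)."""
    problems = []
    seen_names = set()
    for index, entry in enumerate(entries):
        name = entry.get("name", "").strip()
        label = name or f"entry {index}"
        if not name:
            problems.append(f"{label}: missing name")
        elif name in seen_names:
            problems.append(f"{label}: duplicate name")
        seen_names.add(name)
        if not entry.get("question", "").strip():
            problems.append(f"{label}: missing question")
        # Heuristic only: a verified query should be a read-only statement.
        sql = entry.get("sql", "").lstrip().upper()
        if not sql.startswith(("SELECT", "WITH")):
            problems.append(f"{label}: sql does not start with SELECT or WITH")
    return problems


entries = [
    {
        "name": "revenue_last_month",
        "question": "What was total revenue last month?",
        "sql": "SELECT SUM(revenue) AS total_revenue FROM orders",
    },
    {
        "name": "revenue_last_month",  # deliberate duplicate for the demo
        "question": "What is revenue by region this year?",
        "sql": "DELETE FROM orders",  # deliberate non-query for the demo
    },
]
for problem in check_verified_queries(entries):
    print(problem)
```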

What Cortex Analyst Does Not Cover

Cortex Analyst answers point-in-time questions against structured tables. It does not render charts, track metrics over time automatically, or alert when values change. For visual reporting, a BI layer on top is still required, whether that is Streamlit with a charting library, Tableau, or another tool.

It also does not work on unstructured data. PDFs, email bodies, and free-text fields in database columns require Cortex Search, a separate Snowflake feature that uses semantic retrieval rather than SQL generation.

For teams that want to skip warehouse configuration entirely and ask natural-language questions directly from a CSV file upload, VSLZ handles the same workflow with no Snowflake setup required.

Summary

Cortex Analyst is one of the faster ways to give non-technical stakeholders direct access to Snowflake data without building a custom query layer. The setup involves a stage, a semantic model YAML, and a Streamlit interface. Ongoing maintenance means keeping column descriptions accurate as tables evolve and adding verified queries as recurring question types emerge. A practical first step is to identify the one table that generates the most ad-hoc data requests on your team, deploy Cortex Analyst against it, and measure how many of those requests it handles without manual analyst intervention.

FAQ

What is Snowflake Cortex Analyst?

Snowflake Cortex Analyst is a managed AI service inside the Snowflake Data Cloud that converts plain-English questions into SQL queries and returns answers against your structured Snowflake data. It is powered by a large language model and uses a semantic model YAML file to understand your specific tables and business terminology. Business users interact through a chat interface without needing to write SQL.

How do I create a semantic model for Cortex Analyst?

A semantic model is a YAML file that describes your Snowflake tables, columns, and metrics in business language. For each table, you write a description of its grain (what one row represents), then describe each column with a full sentence and include sample values for categorical fields. You upload the YAML file to a Snowflake internal stage, and Cortex Analyst reads it at query time. The quality of your descriptions is the main factor in answer accuracy.

Does Snowflake Cortex Analyst require SQL knowledge to use?

End users do not need SQL knowledge. They interact through a plain-English chat interface and receive direct answers. The person setting up Cortex Analyst needs SQL knowledge to create the stage and load data, but ongoing use requires none. Writing the semantic model YAML requires understanding the data structure and, at most, short expressions such as SUM(revenue); full SQL queries are only needed if you add verified queries.

How accurate is Snowflake Cortex Analyst?

Accuracy depends primarily on the quality of the semantic model YAML. Tables with precise column descriptions, sample values for categorical columns, and a stated grain in the table description achieve high accuracy on common question types. Adding verified queries for the most frequent question patterns improves accuracy further. The main causes of inaccuracy are ambiguous column names and missing descriptions.

What is the difference between Cortex Analyst and Cortex Search in Snowflake?

Cortex Analyst is designed for structured relational data. It generates SQL to answer quantitative business questions such as totals, averages, and breakdowns by category. Cortex Search is designed for unstructured or semi-structured data such as documents, emails, and free-text fields. It uses semantic retrieval rather than SQL. Use Cortex Analyst for numeric and categorical table data; use Cortex Search for document libraries and text-heavy data.