How to Build a Data Dashboard with Streamlit

Last updated Apr 29, 2026

Streamlit converts a Python script into a live web dashboard without any HTML, JavaScript, or server setup. Write Python, save the file, and Streamlit runs a local web server that renders your code as an interactive app. The framework was built to help data scientists share analysis without waiting for an engineering team to build a frontend. It now supports over 1,500 community components and deploys for free via Streamlit Community Cloud, which provides a public URL anyone can visit.

Prerequisites

You need Python 3.9 or higher and pip installed. Verify your version:

python --version

Anything from Python 3.9 through 3.14 works with Streamlit 1.56.0, the current release as of April 2026. You also need a free GitHub account if you plan to deploy to Community Cloud at the end of this guide.
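If the dashboard will run on more than one machine, the same version check can be done from Python itself. The sketch below is illustrative only: the helper name python_is_supported and the hard-coded 3.9 floor are assumptions based on the requirement stated above, not something Streamlit provides.

```python
import sys

# Minimum version stated in this guide; adjust if your Streamlit release differs.
MIN_PYTHON = (3, 9)


def python_is_supported(version=None, minimum=MIN_PYTHON):
    """Return True if the (major, minor) version meets the minimum."""
    if version is None:
        version = sys.version_info[:2]
    return tuple(version[:2]) >= minimum


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "OK" if python_is_supported() else "too old"
    print(f"Python {current}: {status}")
```

Run it with the interpreter inside your virtual environment so that it checks the Python that will run the app.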

Install Streamlit

Create a project folder, set up a virtual environment, and install the required packages:

mkdir sales-dashboard && cd sales-dashboard

python -m venv .venv

source .venv/bin/activate # Windows: .venv\Scripts\activate

pip install streamlit pandas plotly

Confirm the install worked:

streamlit hello

Your browser should open a demo app at localhost:8501. Close it when ready to continue. Record your dependency versions with pip freeze > requirements.txt — you will need this file when deploying.

Load Your Data

Create a file called app.py. The simplest version loads a CSV and renders it as a table:

import streamlit as st
import pandas as pd

st.title("Sales Dashboard")
df = pd.read_csv("sales.csv")
st.dataframe(df)

Run it with streamlit run app.py and your browser opens the live app.

Add the @st.cache_data decorator immediately. Without it, Streamlit reloads the CSV on every user interaction. For a 50,000-row file this adds roughly 200 to 400 milliseconds of lag on each widget click:

@st.cache_data
def load_data(path):
    return pd.read_csv(path)

df = load_data("sales.csv")

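Cached results persist across reruns until the function's arguments or its code change. Editing the CSV on disk does not by itself invalidate the cache, so restart the app or clear the cache after replacing the file. For CSV-backed dashboards, this is usually the single most impactful performance improvement.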
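Because the cache key is built from the function's arguments, one way to pick up edits to the file automatically is to pass its modification time as an extra argument. The sketch below uses functools.lru_cache as a stand-in for @st.cache_data so that it runs without Streamlit. The mtime parameter and the load_fresh wrapper are hypothetical names, not part of the app above.

```python
import os
from functools import lru_cache

import pandas as pd


@lru_cache(maxsize=8)  # stand-in for @st.cache_data in this sketch
def load_data(path: str, mtime: float) -> pd.DataFrame:
    # mtime is unused in the body; it only takes part in the cache key,
    # so a changed file produces a cache miss and a fresh read.
    # Note: lru_cache hands back the same object each time, whereas
    # st.cache_data returns a copy.
    return pd.read_csv(path)


def load_fresh(path: str) -> pd.DataFrame:
    """Load the CSV, re-reading only when the file on disk has changed."""
    return load_data(path, os.path.getmtime(path))
```

In app.py the same trick would apply to the decorated function: keep @st.cache_data on load_data and call it with os.path.getmtime("sales.csv") as the second argument.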

Add Sidebar Filters

The sidebar lets users slice data without cluttering the main panel. Add a multi-select for region and a date range picker:

st.sidebar.header("Filters")

regions = st.sidebar.multiselect(
    "Region",
    options=df["region"].unique(),
    default=df["region"].unique()
)

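dates = pd.to_datetime(df["date"])  # CSV dates load as strings, so parse them once

date_range = st.sidebar.date_input(
    "Date range",
    value=(dates.min().date(), dates.max().date())
)

# date_input returns a single-element tuple until the end date is picked
if len(date_range) != 2:
    st.stop()

start, end = date_range

filtered = df[
    df["region"].isin(regions) &
    (dates >= pd.Timestamp(start)) &
    (dates <= pd.Timestamp(end))
]

st.dataframe(filtered)
st.caption(f"{len(filtered):,} rows matched")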
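The filtering step can also be factored into a plain function. That makes it testable without launching Streamlit and gives one place to handle two details: dates read from a CSV are strings, and st.sidebar.date_input returns fewer than two dates while a range selection is in progress. This is a minimal sketch. The function name filter_sales and the sample rows are made up for illustration, and the column names are assumed to match the ones used above.

```python
from datetime import date

import pandas as pd


def filter_sales(df, regions, date_range):
    """Return rows in the chosen regions and inclusive date range.

    date_range is the value returned by st.sidebar.date_input; it holds
    fewer than two dates while the user is still picking the end date,
    in which case only the region filter is applied.
    """
    mask = df["region"].isin(regions)
    if len(date_range) == 2:
        start, end = (pd.Timestamp(d) for d in date_range)
        dates = pd.to_datetime(df["date"])  # CSV dates arrive as strings
        mask &= dates.between(start, end)
    return df[mask]


# Small made-up frame to show the behaviour.
sample = pd.DataFrame(
    {
        "region": ["East", "West", "East"],
        "date": ["2026-01-05", "2026-01-20", "2026-02-10"],
        "revenue": [100, 250, 175],
    }
)
january_east = filter_sales(sample, ["East"], (date(2026, 1, 1), date(2026, 1, 31)))
print(january_east)
```

In the app, the call would look like filtered = filter_sales(df, regions, date_range), with regions and date_range coming straight from the sidebar widgets.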

Streamlit reruns the entire script from top to bottom each time a widget changes. This stateless execution model makes the framework straightforward to reason about — every render is a fresh Python execution with the current widget values injected.

Build Charts with Plotly

For dashboards users return to regularly, interactive Plotly charts are worth the extra lines. Users can zoom, hover for exact values, and export to PNG without any additional setup:

import plotly.express as px

monthly = (
    filtered
    .assign(month=pd.to_datetime(filtered["date"]).dt.to_period("M").astype(str))
    .groupby("month", as_index=False)["revenue"]
    .sum()
)

fig = px.bar(
    monthly,
    x="month",
    y="revenue",
    title="Monthly Revenue",
    labels={"month": "Month", "revenue": "Revenue (USD)"}
)

st.plotly_chart(fig, use_container_width=True)

Add a metrics row above the chart using st.columns and st.metric. Three numbers side by side give users immediate context before they reach the visualization:

col1, col2, col3 = st.columns(3)
col1.metric("Total Revenue", f"USD {filtered['revenue'].sum():,.0f}")
col2.metric("Orders", f"{len(filtered):,}")
col3.metric("Avg Order Value", f"USD {filtered['revenue'].mean():,.0f}")
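One edge case to watch: when the filters match no rows, mean() returns NaN and the third tile reads "USD nan". A small helper can compute the three values with an explicit fallback. This is a sketch, not the only way to do it. The names summary_metrics and format_usd are hypothetical, and it assumes the revenue column has no missing values.

```python
import pandas as pd


def summary_metrics(df: pd.DataFrame) -> dict:
    """Compute the three headline numbers, tolerating an empty frame."""
    orders = len(df)
    total = float(df["revenue"].sum())
    # mean() of an empty column is NaN, so fall back to 0.0 explicitly.
    average = total / orders if orders else 0.0
    return {"total": total, "orders": orders, "average": average}


def format_usd(value: float) -> str:
    """Format a number the same way the metric tiles do."""
    return f"USD {value:,.0f}"
```

The tiles would then be filled with calls such as col3.metric("Avg Order Value", format_usd(m["average"])), where m = summary_metrics(filtered).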

For line charts over time or scatter plots comparing two metrics, Plotly Express covers most use cases with a single function call. The full chart gallery is at plotly.com/python.

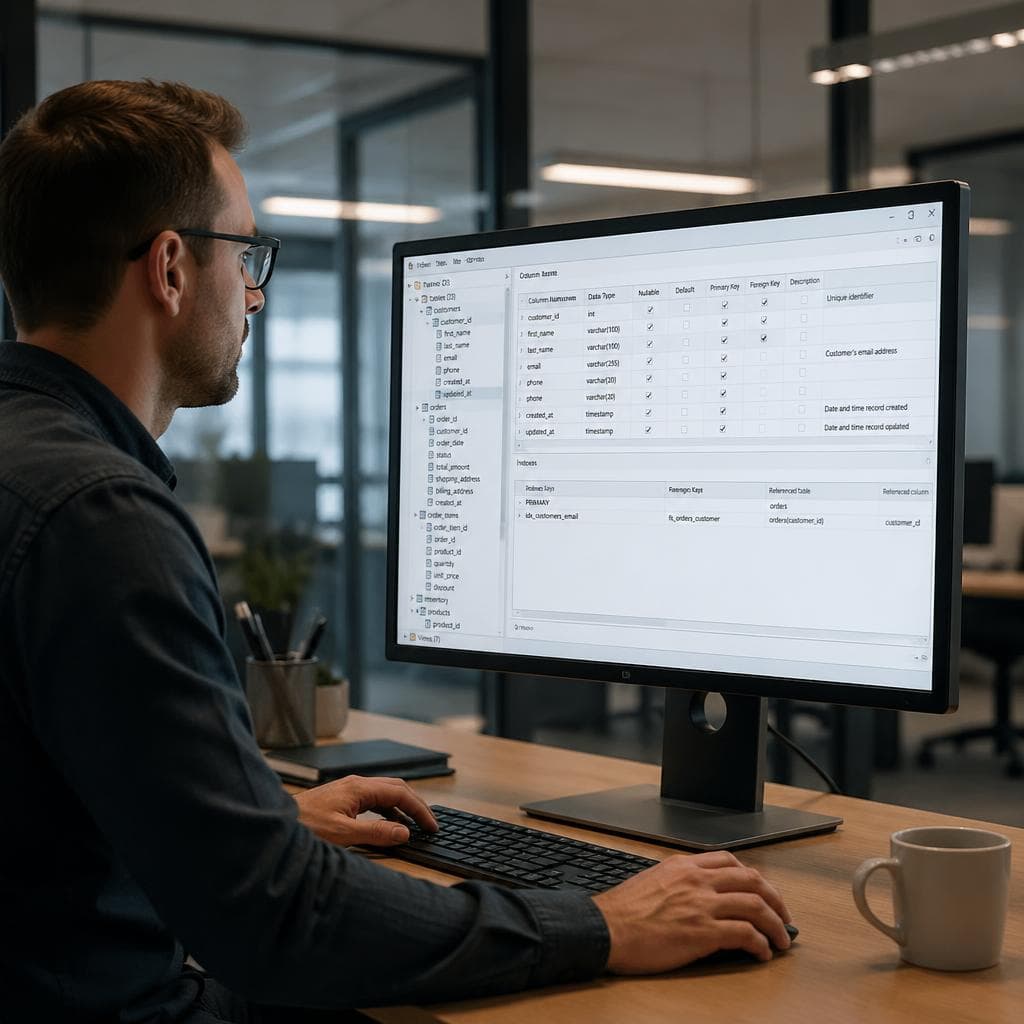

Connect to a Live Database

Static CSV dashboards go stale. For live data, connect through SQLAlchemy and cache the engine so that its connection pool is reused across reruns:

from sqlalchemy import create_engine

@st.cache_resource
def get_engine():
    return create_engine(st.secrets["DATABASE_URL"])

df = pd.read_sql(
    "SELECT * FROM sales WHERE date >= current_date - 30",
    get_engine()
)

Store DATABASE_URL in .streamlit/secrets.toml locally, and add that file to .gitignore so credentials never reach GitHub; Streamlit does not exclude it for you. On Community Cloud, paste the same key-value pairs into the Secrets panel before deploying.

The @st.cache_resource decorator keeps the engine connection alive across reruns rather than opening a new connection on every page load. Without it, a dashboard with ten concurrent users can open ten simultaneous connections and hit pool limits on free-tier databases.
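The current_date - 30 expression in the query above is PostgreSQL-style date arithmetic and may not run unchanged on other databases. One portable alternative is to compute the cutoff in Python and pass it as a bound parameter. The sketch below uses an in-memory SQLite database from the standard library as a stand-in for the real server so that it runs anywhere. The recent_sales helper and the sample rows are illustrative assumptions.

```python
import sqlite3
from datetime import date, timedelta

import pandas as pd


def recent_sales(conn, days=30, today=None):
    """Return sales rows from the last `days` days using a bound parameter."""
    today = today or date.today()
    cutoff = (today - timedelta(days=days)).isoformat()
    # "?" is the sqlite3 placeholder; other drivers use a different style.
    return pd.read_sql("SELECT * FROM sales WHERE date >= ?", conn, params=(cutoff,))


# In-memory stand-in database with made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (date TEXT, region TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("2026-04-20", "East", 120.0),
        ("2026-03-01", "West", 90.0),
        ("2026-04-28", "West", 210.0),
    ],
)
recent = recent_sales(conn, days=30, today=date(2026, 4, 29))
print(recent)
```

With the SQLAlchemy engine from get_engine(), the same idea would typically be written with sqlalchemy.text and a named placeholder such as :cutoff, passing params={"cutoff": cutoff} to pd.read_sql.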

If you want answers from your data without building and maintaining a dashboard, VSLZ lets you upload a CSV or connect a data source and run end-to-end analysis from a plain-English prompt.

Deploy to Streamlit Community Cloud

Community Cloud provides free public hosting. The deploy takes under five minutes:

- Push your project to a public GitHub repository. The repository must include requirements.txt listing streamlit, pandas, and plotly.
- Visit streamlit.io/cloud and sign in with GitHub.
- Click New app, select your repository and branch, and set the main file path to app.py.
- Paste any secrets into the Secrets panel, then click Deploy.

Community Cloud builds the app, installs dependencies, and provides a public URL in the format https://[username]-[repo]-app-[hash].streamlit.app. Every push to the main branch triggers an automatic rebuild. There is no container to manage or server to monitor.
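A missing entry in requirements.txt is an easy way to end up with a failed build, so a quick check before pushing can save a round trip. The script below is a rough sketch: the function name missing_requirements is made up, the parsing handles only simple requirement lines, and the required set is the three packages installed earlier in this guide.

```python
import re
from pathlib import Path

REQUIRED = {"streamlit", "pandas", "plotly"}


def missing_requirements(text: str, required=REQUIRED) -> set:
    """Return required package names that do not appear in requirements text."""
    found = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Package name is everything before a version specifier or extras.
        name = re.split(r"[<>=!~\[; ]", line, maxsplit=1)[0]
        found.add(name.lower())
    return set(required) - found


if __name__ == "__main__":
    path = Path("requirements.txt")
    if path.exists():
        missing = missing_requirements(path.read_text())
        print("Missing:", ", ".join(sorted(missing)) or "none")
    else:
        print("requirements.txt not found")
```

If the app uses the database connection from the previous section, add sqlalchemy and your database driver to the required set as well.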

For teams that need private apps with SSO and custom domains, Streamlit Workspaces is the paid tier. The open-source framework and Community Cloud hosting are both free.

What Changed in Streamlit 1.56.0

The April 2026 release added three features worth knowing. st.menu_button renders a dropdown button with a popover container, useful for action menus in toolbars without adding sidebar clutter. st.iframe embeds external URLs or raw HTML inline, which is helpful if existing reports live in Tableau or another tool and you want to wrap them in a Streamlit layout. Widgets like st.selectbox and st.multiselect now support a filter_mode parameter so users can type to search a long list rather than scrolling through hundreds of values.

Version 1.55.0 from March 2026 added on_change support to dynamic containers: st.tabs, st.popover, and st.expander can now trigger a callback when opened or closed, enabling conditional UI logic without custom JavaScript.

Summary

A working Streamlit dashboard covers four elements: data loading with caching, sidebar filters that reshape a dataframe, at least one interactive chart that updates with the filter state, and summary metrics at the top. The full workflow from an empty folder to a deployed public URL typically takes 30 to 60 minutes depending on data complexity. For ongoing dashboards, connecting to a live database via SQLAlchemy and storing credentials in .streamlit/secrets.toml keeps the data current without manual CSV exports.

FAQ

Is Streamlit free to use?

Yes. The Streamlit framework is open-source under the Apache 2.0 license and free to use. Streamlit Community Cloud provides free public hosting for apps connected to a GitHub repository. A paid tier called Streamlit Workspaces adds private deployment, SSO, custom domains, and higher resource limits.

Can Streamlit connect to PostgreSQL or MySQL?

Yes. Use SQLAlchemy to create a database engine and pass it to pandas.read_sql. Store the connection string in .streamlit/secrets.toml locally or in the Secrets panel on Community Cloud. The @st.cache_resource decorator keeps the connection pool alive across reruns, avoiding excessive connections on concurrent user sessions.

What is the difference between @st.cache_data and @st.cache_resource?

Use @st.cache_data for serializable objects that can be safely copied, such as DataFrames, lists, and dictionaries. Streamlit stores a separate cached copy per unique set of function arguments. Use @st.cache_resource for shared singleton objects that cannot or should not be copied, such as database engine connections, machine learning models, and API clients. Cache resource objects are shared across all users and sessions.
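The copy-versus-shared distinction can be seen in a toy model. The two decorators below are hand-written stand-ins, not Streamlit's implementation: one returns a fresh deserialized copy on every call, the other returns the stored object itself. All names in the sketch are hypothetical.

```python
import pickle


def cache_data_like(func):
    """Toy model: store a pickled result and return a fresh copy per call."""
    store = {}

    def wrapper(*args):
        if args not in store:
            store[args] = pickle.dumps(func(*args))
        return pickle.loads(store[args])

    return wrapper


def cache_resource_like(func):
    """Toy model: store the object itself and return the same one every time."""
    store = {}

    def wrapper(*args):
        if args not in store:
            store[args] = func(*args)
        return store[args]

    return wrapper


@cache_data_like
def load_rows():
    return [1, 2, 3]


@cache_resource_like
def get_client():
    return {"connected": True}  # stands in for an engine or model


rows = load_rows()
rows.append(4)            # mutates only this caller's copy
fresh_rows = load_rows()  # still the original three values

client_a = get_client()
client_b = get_client()   # the very same object as client_a
```

The practical consequence is that mutating a value returned from a cache_data-style function does not affect other sessions, while mutating a cached resource changes it for every user of the app.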

How do I add login authentication to a Streamlit app?

Community Cloud supports Google OAuth and GitHub authentication through app settings. For custom username and password login, the streamlit-authenticator community package adds hashed credential management stored in a YAML file. For enterprise SSO, Streamlit Workspaces integrates with identity providers including Okta, Azure AD, and Google Workspace.

How many concurrent users can a free Streamlit Community Cloud app handle?

Community Cloud does not publish a hard concurrent user limit for free apps, but free-tier resources are shared. Apps with more than 10 to 20 simultaneous active users may hit memory or CPU limits and become slow or unresponsive. For production dashboards with larger audiences, Streamlit Workspaces or self-hosting on a cloud VM provides dedicated resources and guaranteed availability.