How to Set Up OpenMetadata for Data Discovery

Last updated Apr 29, 2026

OpenMetadata solves a problem every data team hits eventually: no one knows which tables are live, who owns them, or what the columns actually mean. The platform ingests metadata automatically from your existing databases, warehouses, and BI tools, then presents everything in a searchable catalog with column-level lineage, quality signals, and owner assignments. This guide walks through a local Docker setup, connecting your first data source, and running your first ingestion.

What OpenMetadata Does

OpenMetadata is an open-source metadata platform maintained by Collate, the commercial entity behind the project. When you have tables scattered across Postgres, BigQuery, Snowflake, and dashboards in Redash or Tableau, OpenMetadata pulls structural information from each system into a unified catalog without moving your data.

As of 2026, the project has 90+ connectors covering warehouses, data lakes, BI tools, streaming platforms, and ML models. It is used in production at companies including Walmart, Airbus, and Netflix. The catalog surface includes column definitions, sample data, profiling statistics, ownership, tags, and full lineage from raw source to final dashboard.

Prerequisites

Before starting, confirm you have:

- Docker Desktop version 23 or later installed

- At least 6 GB of RAM allocated to Docker (configure in Docker Desktop > Settings > Resources)

- At least 4 vCPUs allocated to Docker

- Roughly 5 GB of free disk space for the initial image pull

The most common first-run failure is insufficient RAM. If OpenMetadata starts and immediately exits, run docker stats and increase your Docker memory limit before retrying.
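A quick preflight check can catch this before the first image pull. The sketch below compares what Docker reports against the minimums listed above. The helper name check_resources is hypothetical; only the docker info format fields MemTotal and NCPU come from Docker itself. Memory is rounded to the nearest GiB because the Docker VM usually reports slightly less than the configured value.

```shell
# Hypothetical helper: checks Docker's reported memory (bytes) and CPU count
# against this guide's minimums of 6 GB RAM and 4 vCPUs.
check_resources() {
  local mem_bytes=$1 cpus=$2
  local gib=$(( 1024 * 1024 * 1024 ))
  # Round to the nearest GiB rather than truncating.
  local mem_gib=$(( (mem_bytes + gib / 2) / gib ))
  local status=0
  if [ "$mem_gib" -lt 6 ]; then
    echo "memory: ${mem_gib} GiB (need at least 6)"
    status=1
  fi
  if [ "$cpus" -lt 4 ]; then
    echo "cpus: ${cpus} (need at least 4)"
    status=1
  fi
  if [ "$status" -eq 0 ]; then
    echo "resources look sufficient"
  fi
  return "$status"
}

# Usage (requires a running Docker daemon):
#   check_resources $(docker info --format '{{.MemTotal}} {{.NCPU}}')
```

A non-zero exit status means at least one limit should be raised in Docker Desktop > Settings > Resources before continuing.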

Step 1: Create a Working Directory and Download the Compose File

Create a dedicated directory and download the default Docker Compose file:

mkdir openmetadata && cd openmetadata

curl -sL https://github.com/open-metadata/OpenMetadata/releases/latest/download/docker-compose.yml -o docker-compose.yml

OpenMetadata ships with two database backend options: MySQL (default) and PostgreSQL. For most first-time setups, the MySQL file works well. If your team already runs PostgreSQL, substitute docker-compose-postgres.yml to keep your internal stack consistent.
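As a small illustration of that choice, the sketch below maps a backend name to the matching download URL. The function name compose_url is hypothetical, and it assumes the PostgreSQL file is published under the same release path as the default file.

```shell
# Hypothetical helper: prints the compose file URL for a given backend.
compose_url() {
  local base="https://github.com/open-metadata/OpenMetadata/releases/latest/download"
  case "$1" in
    mysql)    echo "$base/docker-compose.yml" ;;
    postgres) echo "$base/docker-compose-postgres.yml" ;;
    *)        echo "unknown backend: $1" >&2; return 1 ;;
  esac
}

# Usage:
#   curl -sL "$(compose_url postgres)" -o docker-compose-postgres.yml
```

If you download the PostgreSQL variant, pass that file name to docker compose with -f when starting the stack.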

Step 2: Start the Stack

docker compose -f docker-compose.yml up --detach

This command pulls and starts four containers: the OpenMetadata application server, Apache Airflow (used to schedule ingestion pipelines), Elasticsearch (for full-text catalog search), and MySQL (the metadata store). The initial image pull typically takes 3 to 8 minutes on a standard broadband connection.

Verify all containers are running:

docker compose ps

All four containers should show status Up. Airflow can take 60 to 90 seconds longer than the others to initialize. If it shows Up (starting), wait one minute and check again.
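Rather than rechecking by hand, you can poll until the server answers. The sketch below is a generic retry loop; wait_for is a hypothetical helper, and the version endpoint in the usage comment is an assumption about the server's REST API, so substitute any URL you know responds once the UI is up.

```shell
# Hypothetical helper: runs a command up to N times, sleeping between tries,
# and succeeds as soon as the command exits with status 0.
wait_for() {
  local attempts=$1 delay=$2 i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      echo "ready after $i attempt(s)"
      return 0
    fi
    sleep "$delay"
    i=$(( i + 1 ))
  done
  echo "not ready after $attempts attempt(s)" >&2
  return 1
}

# Usage (assumed endpoint; up to 30 tries, 5 seconds apart):
#   wait_for 30 5 curl -sf http://localhost:8585/api/v1/system/version
```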

Step 3: Log Into the UI

Once all containers are running, open a browser and navigate to http://localhost:8585. The default credentials are:

- Username: admin@open-metadata.org (recent releases use this email-style login rather than plain admin)

- Password: admin

Change these immediately in Settings > Team and User Management > Users if the instance is on any shared or internet-accessible machine.

The first login triggers a brief onboarding wizard. Completing it sets your organization name and creates the initial admin profile, both of which are referenced when you later assign data asset owners.

Step 4: Connect Your First Data Source

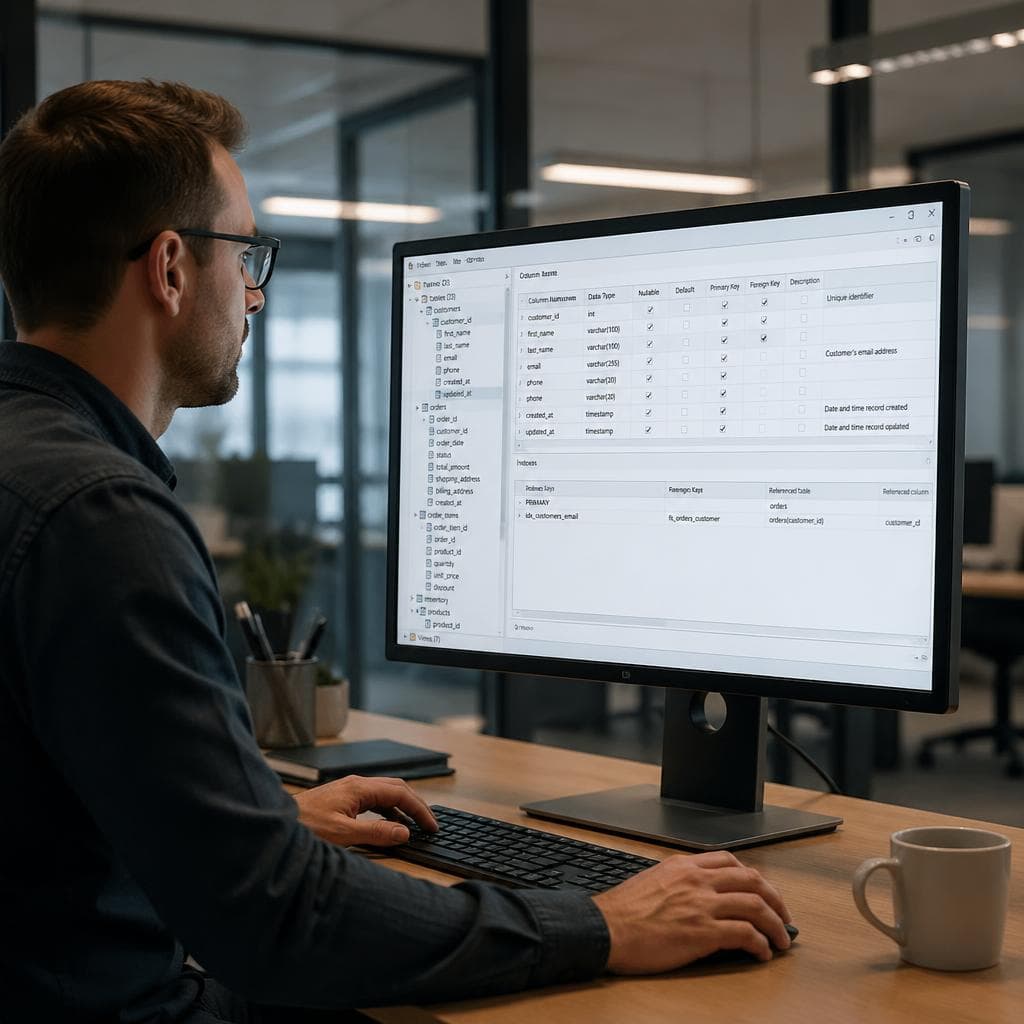

Go to Settings > Services > Databases, then click Add New Service. OpenMetadata calls each external system a Service, and each Service type has its own connector.

For a PostgreSQL database, select the PostgreSQL connector and provide:

- Host and port (for example, your-db-host:5432)

- Username and password for a read-only user

- Target database name

For cloud warehouses like BigQuery, the connector asks for a service account JSON key. For Snowflake, it requests account identifier, warehouse, and role. Each connector includes inline documentation listing the exact permissions required.

Best practice: connect a non-critical analytics replica or create a dedicated read-only database user rather than using production admin credentials. Metadata ingestion only reads schema information and, optionally, a small sample of rows. No data is written or moved.
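For PostgreSQL, a dedicated read-only user could be set up roughly as follows. This is a minimal sketch, not the connector's official permission list: the helper readonly_user_sql, the role name om_reader, and the database name analytics are all hypothetical, and the connector's inline documentation remains the authority on which grants it actually needs.

```shell
# Hypothetical helper: prints SQL that creates a login role with read-only
# access to one schema. It only prints; nothing is executed against a database.
readonly_user_sql() {
  local role=$1 db=$2 schema=${3:-public}
  cat <<EOF
CREATE ROLE ${role} LOGIN;
GRANT CONNECT ON DATABASE ${db} TO ${role};
GRANT USAGE ON SCHEMA ${schema} TO ${role};
GRANT SELECT ON ALL TABLES IN SCHEMA ${schema} TO ${role};
EOF
}

# Usage (review the output first, then pipe it to psql as an admin user):
#   readonly_user_sql om_reader analytics | psql -h your-db-host -U postgres -d analytics
# Then set a password interactively inside psql with: \password om_reader
```

Note that GRANT SELECT ON ALL TABLES covers only tables that exist when it runs, so tables created later need the grant repeated.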

Step 5: Create and Run an Ingestion Pipeline

After saving the Service, OpenMetadata prompts you to create an Ingestion Pipeline. An ingestion pipeline is a scheduled job, powered by Airflow, that periodically pulls fresh metadata from your source and updates the catalog.

For an initial setup:

- Select Metadata Ingestion as the pipeline type

- Enable "Mark Deleted Tables" so dropped tables are flagged in the catalog rather than silently removed

- Set the schedule to run daily (a cron expression of 0 0 * * * works)

- Click Deploy, then trigger a manual run immediately from the Ingestion tab
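If the cron syntax in the schedule step is unfamiliar, the sketch below labels the five standard fields of an expression. The helper explain_cron is hypothetical and only splits and names the fields; it does not validate their values.

```shell
# Hypothetical helper: labels the five fields of a standard cron expression.
explain_cron() {
  # Disable globbing so the * fields are not expanded into file names.
  set -f
  # Intentionally unquoted: split the expression on whitespace.
  set -- $1
  set +f
  if [ "$#" -ne 5 ]; then
    echo "expected exactly 5 fields, got $#" >&2
    return 1
  fi
  printf 'minute=%s hour=%s day-of-month=%s month=%s day-of-week=%s\n' \
    "$1" "$2" "$3" "$4" "$5"
}

# Usage:
#   explain_cron '0 0 * * *'
```

For 0 0 * * * this shows minute 0 and hour 0 with every other field left as a wildcard, which is a run at midnight every day.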

A successful first run on a database with under 500 tables typically completes in 30 seconds to 2 minutes. Once it finishes, every table, view, and schema from that database appears in the Explore view.

Step 6: Browse and Search the Catalog

Navigate to Explore in the top navigation. Every ingested table appears here with:

- Full schema listing with column names, data types, and nullable flags

- Sample data rows (requires SELECT permissions on the source)

- Profiling stats: row counts, null percentages, unique value distributions

- Column-level lineage (populated once you connect a second source like dbt or Tableau)

- Owner field, custom tags, and a description editor

The search bar is full-text and Elasticsearch-backed. Searching for "revenue" returns every table, column, and dashboard containing that term in its name, description, or tag set. For a team of around 10 analysts, this feature alone can cut the time to locate a trusted data asset from hours to under 30 seconds.
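The same search can likely be scripted against the server's REST API. The sketch below only builds the request URL. The helper search_url is hypothetical, and the endpoint path, the index name table_search_index, and the paging parameters are assumptions to verify against the API documentation for your release. It also assumes a single-word term that needs no URL encoding.

```shell
# Hypothetical helper: builds a catalog search URL (assumed endpoint and index).
search_url() {
  local base=$1 term=$2 index=${3:-table_search_index}
  echo "${base}/api/v1/search/query?q=${term}&index=${index}&from=0&size=10"
}

# Usage (OM_TOKEN is a placeholder for an access token issued by your instance):
#   curl -s -H "Authorization: Bearer $OM_TOKEN" "$(search_url http://localhost:8585 revenue)"
```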

Step 7: Add Owners and Descriptions

Ingested metadata without human context is a lookup directory, not a working catalog. After your first ingestion run, spend 15 minutes assigning owners to your top 10 most-queried tables and writing a one-paragraph description for each.

To add an owner, open any table page, click the Owner field, and search for any user who has logged into OpenMetadata. Once assigned, every team member who finds that table in search sees exactly who to contact for access, quality concerns, or schema questions.

Tag tiers work the same way. Creating a Bronze/Silver/Gold tag scheme to reflect data quality levels gives downstream analysts an instant signal about which tables are safe to use in production dashboards.

If you want help identifying which tables are being queried most often before you start documenting, VSLZ AI can read your data sources directly and surface usage patterns from a single prompt, helping you prioritize which assets to document first.

Connecting Additional Sources

Once your first source is ingested, add more from the Services panel. OpenMetadata connectors cover the main categories:

- Data warehouses: BigQuery, Snowflake, Redshift, Databricks

- Relational databases: PostgreSQL, MySQL, SQL Server, Oracle

- BI tools: Tableau, Looker, Metabase, Power BI, Redash

- Transformation layers: dbt (populates lineage and test results automatically)

- Object storage: S3, GCS, Azure Data Lake

Lineage becomes useful once at least two sources are connected. If a BigQuery staging table feeds a dbt model that powers a Tableau dashboard, OpenMetadata draws the full chain automatically. This kind of impact analysis previously required expensive enterprise data governance tools or manual documentation that inevitably goes stale.

Practical Summary

A working OpenMetadata instance takes roughly 30 minutes from Docker installation to first successful ingestion. The catalog delivers immediate value for new team member onboarding and cross-team data discovery. The deeper value, lineage tracking and quality-signal propagation, builds over the following weeks as you connect more sources and teams adopt the catalog as their first stop before writing a new query or building a new dashboard. Start with your most business-critical database, assign owners to the top 10 tables, and run daily ingestion so the catalog reflects your live schema without manual updates.