How to Set Up Lightdash with dbt

Last updated Apr 24, 2026

Lightdash is an open-source BI tool designed around one idea: your dbt models are already your semantic layer. Instead of rebuilding metric definitions in a separate BI tool, Lightdash reads the field descriptions, metric calculations, and relationships you already wrote in your dbt YAML files. Setup takes under an hour. Install with Docker Compose, connect your warehouse and dbt project, add column definitions to your YAML, and your models become queryable charts and dashboards that business users can explore without SQL.

What Makes Lightdash Different from Metabase and Superset

Metabase and Apache Superset let you point at any database table and start building charts. That flexibility is useful, but it means metric definitions live only inside the BI tool. If an analyst changes the revenue calculation in dbt, Metabase does not know. Teams end up with two competing definitions of the same number.

Lightdash avoids this by requiring a dbt project as the foundation. Every dimension and metric in Lightdash is backed by a dbt model column definition. When your dbt YAML changes, Lightdash picks up the change on the next sync. There is one source of truth.

The trade-off is a higher setup requirement. Lightdash is not the right tool if you do not already have dbt. But for teams running dbt in production, it eliminates an entire category of data trust problems.

As of April 2026, Lightdash has over 4,000 GitHub stars and ships weekly releases. The self-hosted community edition is free and includes nearly the full feature set.

Prerequisites

Before starting you need:

- A working dbt project (Core or Cloud) with at least a few tested models

- Docker and Docker Compose installed on your machine or server

- Warehouse credentials for a supported warehouse, such as BigQuery, Snowflake, Redshift, PostgreSQL, DuckDB, or Databricks

If you want to evaluate Lightdash before connecting your own project, the Lightdash team provides a public demo dbt project called Jaffle Shop that you can use for the full walkthrough.

Step 1: Install Lightdash with Docker Compose

Clone the repository and copy the example environment file:

    git clone https://github.com/lightdash/lightdash
    cd lightdash
    cp .env.example .env

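Open .env and set the secret key to a random 32-character string. The Lightdash environment-variable reference names this variable LIGHTDASH_SECRET rather than SECRET_KEY, so check your copy of .env for the exact name. This key encrypts the warehouse credentials stored in Lightdash's metadata database. Do not skip this step or use the placeholder value in production.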
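One way to produce a suitable value is Python's standard secrets module. This is a minimal sketch, not something the Lightdash documentation prescribes, and generate_secret is a hypothetical helper name.

```python
import secrets


def generate_secret(length: int = 32) -> str:
    """Return a random hexadecimal string of exactly `length` characters."""
    if length <= 0 or length % 2:
        raise ValueError("length must be a positive even number")
    # token_hex(n) returns 2 * n hex characters from the OS random source.
    return secrets.token_hex(length // 2)


if __name__ == "__main__":
    # Paste the printed value into .env as the secret key.
    print(generate_secret())
```

Generate the value once and keep it stable across restarts, since the stored credentials are encrypted with it.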

Start all services:

docker compose up -d

This starts four containers: the Lightdash application server, a PostgreSQL database for metadata, Redis for caching and queuing, and a headless Chromium browser for scheduled PDF report exports. On a first run, pulling all images takes 3 to 5 minutes.

Once containers are running, navigate to http://localhost:8080 and complete the registration form to create the admin account. If you are deploying on a remote server rather than locally, replace localhost with your server's IP address or hostname.
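If the page does not load right away, the application may still be starting. The sketch below polls the URL until it answers, using only the standard library. The function name wait_until_up and the retry defaults are assumptions chosen for illustration.

```python
import time
import urllib.error
import urllib.request


def wait_until_up(url, attempts=30, delay=2.0, opener=urllib.request.urlopen):
    """Poll `url` until it answers with an HTTP status below 500.

    Returns True once the server responds, False if every attempt fails.
    `opener` is injectable so the function can be exercised without a network.
    """
    for attempt in range(attempts):
        try:
            with opener(url, timeout=5) as response:
                if response.status < 500:
                    return True
        except urllib.error.HTTPError as err:
            # A 4xx reply still proves the server is listening.
            if err.code < 500:
                return True
        except OSError:
            # Connection refused or timed out: the server is not ready yet.
            pass
        if attempt < attempts - 1:
            time.sleep(delay)
    return False
```

Calling wait_until_up("http://localhost:8080") from the Docker host returns True as soon as the registration page is being served. On a remote deployment, pass the server's address instead.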

Step 2: Connect Your dbt Project

Lightdash offers three ways to connect a dbt project. You select one in the project setup wizard.

dbt Core (local directory): Choose the local path option and enter the absolute path to the directory containing your dbt_project.yml. Lightdash runs dbt compile inside its container using this path, so the directory must be visible from inside the container (for example, through a mounted volume) and not only on the host. This works well for local development and for servers where you maintain the dbt project as a cloned repository.

dbt Cloud: Enter your dbt Cloud API token and the environment ID for the environment you want to use. Lightdash fetches compiled manifest files directly from dbt Cloud. No local project files needed on the Lightdash host.

GitHub or GitLab: Connect a repository. Lightdash clones and compiles the project on a schedule you configure. This is useful for teams that want Lightdash to always reflect the latest merged code without manually triggering a sync.

After choosing your connection method, enter your data warehouse credentials. Lightdash runs a test connection before saving. If the test fails, the two most common causes are IP allowlist restrictions (your warehouse is blocking the Docker host's IP) and insufficient service account permissions. For BigQuery, the service account needs BigQuery Data Viewer and BigQuery Job User roles at minimum.
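For warehouses reached by host and port, such as PostgreSQL or Redshift, a plain TCP check run from the Docker host can help tell the two causes apart. If the port does not open at all, the problem is likely network-level (an allowlist or firewall) rather than permissions. This is a rough diagnostic sketch, and can_reach is a hypothetical helper name. It does not apply to BigQuery, which is accessed over HTTPS APIs.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False
```

A True result only shows that the port is reachable. Authentication and role problems still need to be checked in the warehouse itself.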

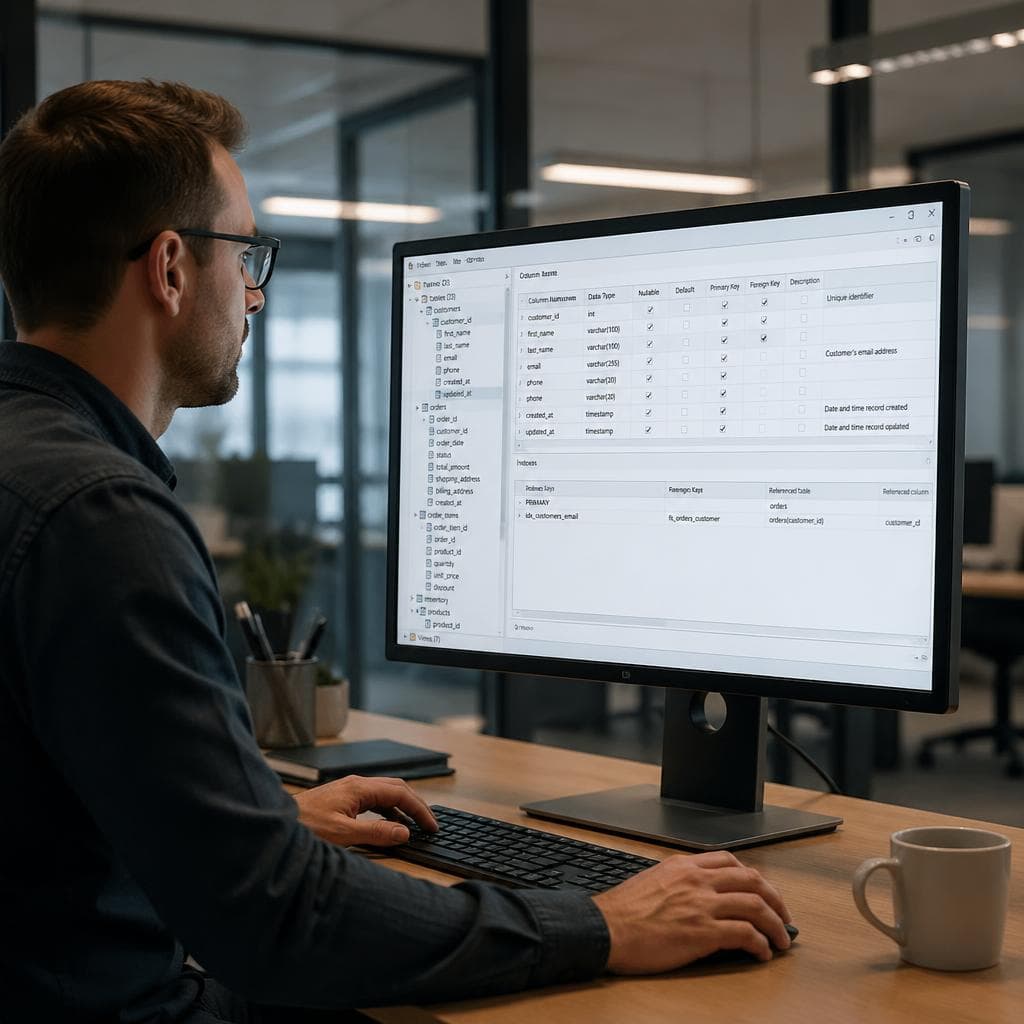

Step 3: Define Dimensions and Metrics in Your dbt YAML

Once connected, Lightdash reads your dbt model YAML files to populate its field catalog. Models with no column definitions generate only a sparse set of auto-detected fields. To get full value, add meta blocks to your model columns.

Here is a practical example for an orders model:

    models:
      - name: orders
        description: One row per customer order
        columns:
          - name: order_id
            description: Unique order identifier
            meta:
              dimension:
                type: string
                hidden: true
          - name: created_at
            description: Timestamp when the order was placed
            meta:
              dimension:
                type: timestamp
                time_intervals: [DAY, WEEK, MONTH]
          - name: status
            description: Order status (pending, shipped, delivered, returned)
            meta:
              dimension:
                type: string
          - name: revenue
            description: Order revenue in USD
            meta:
              metrics:
                total_revenue:
                  type: sum
                  label: Total Revenue
                average_order_value:
                  type: average
                  label: Average Order Value
                order_count:
                  type: count_distinct
                  sql: order_id
                  label: Number of Orders

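After updating your YAML, push the changes with the Lightdash CLI (distributed as the @lightdash/cli npm package). Per the CLI reference, lightdash deploy compiles the dbt project and deploys it to your Lightdash project, whereas lightdash dbt run runs dbt and then generates YAML for the selected models:

    lightdash deploy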

Or, if you are connected via dbt Cloud or Git, trigger a manual refresh in the Lightdash project settings. The field catalog updates without a page reload.

Start with the ten to fifteen fields your team queries most often. You do not need to define every column in every model before going live.
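To see which columns still lack definitions as you expand coverage, a small audit over the parsed YAML can help. The sketch below works on a plain dictionary shaped like the output of a YAML parser (for instance PyYAML's yaml.safe_load, a third-party package). The function name undefined_columns and the discount_code column are hypothetical.

```python
def undefined_columns(schema: dict) -> dict:
    """Map each model name to the columns with no dimension or metrics meta block."""
    missing = {}
    for model in schema.get("models") or []:
        bare = []
        for column in model.get("columns") or []:
            meta = column.get("meta") or {}
            if not (meta.get("dimension") or meta.get("metrics")):
                bare.append(column["name"])
        if bare:
            missing[model["name"]] = bare
    return missing


# Example input mirroring part of the orders model, plus one
# hypothetical column (discount_code) that has no meta block yet.
EXAMPLE_SCHEMA = {
    "models": [
        {
            "name": "orders",
            "columns": [
                {"name": "order_id", "meta": {"dimension": {"type": "string"}}},
                {"name": "revenue", "meta": {"metrics": {"total_revenue": {"type": "sum"}}}},
                {"name": "discount_code"},
            ],
        }
    ]
}

if __name__ == "__main__":
    print(undefined_columns(EXAMPLE_SCHEMA))  # {'orders': ['discount_code']}
```

An empty result means every listed column has at least one dimension or metric defined.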

Step 4: Explore Data and Build Dashboards

In the Lightdash UI, go to Explore and select a model. The left panel shows all dimensions and metrics you defined. Click any combination to run an automatic query and preview results in a table. Switch to a chart view and select the chart type: bar, line, scatter, pie, or a simple big number.

Lightdash auto-generates SQL from your selections and displays it in a collapsible panel. This is useful for validating that the query logic matches your intent before sharing the chart with stakeholders.
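To build intuition for what to expect in that panel, the sketch below shows how metric types like the ones defined in Step 3 map onto SQL aggregates. It is an illustrative approximation, not Lightdash's actual query compiler. The real SQL will differ in quoting, aliasing, and dialect, and sketch_query is a hypothetical name.

```python
# Rough mapping from metric type to SQL aggregate (an assumption for
# illustration; Lightdash supports more types than these three).
AGGREGATES = {
    "sum": "SUM({expr})",
    "average": "AVG({expr})",
    "count_distinct": "COUNT(DISTINCT {expr})",
}


def sketch_query(table: str, dimension: str, metrics: dict) -> str:
    """Build a simplified GROUP BY query.

    `metrics` maps a metric name to a (metric_type, sql_expression) pair.
    """
    select = [dimension]
    for name, (metric_type, expr) in metrics.items():
        aggregate = AGGREGATES[metric_type].format(expr=expr)
        select.append(f"{aggregate} AS {name}")
    columns = ",\n  ".join(select)
    return f"SELECT\n  {columns}\nFROM {table}\nGROUP BY 1"


if __name__ == "__main__":
    print(
        sketch_query(
            "orders",
            "status",
            {
                "total_revenue": ("sum", "revenue"),
                "order_count": ("count_distinct", "order_id"),
            },
        )
    )
```

Comparing a sketch like this with the SQL Lightdash displays is a quick way to confirm that a metric aggregates the column you intended.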

Save any chart to a dashboard. Dashboards can have multiple charts and a date filter that applies across all charts at once, useful for weekly or monthly reporting views.

Sharing options: generate a view-only public link (recipients do not need a Lightdash account), or share to specific team members. Scheduled email delivery and Slack alerts are available on the Lightdash Cloud plan.

Step 5: Manage User Access

Lightdash uses role-based access control with five roles: Viewer, Interactive Viewer, Editor, Developer, and Admin.

For most business stakeholders, assign Viewer. They can open dashboards and filter data but cannot modify charts. Analysts who build charts get Editor. Developers who connect new dbt projects or manage project settings need the Developer or Admin role.

To add users, go to Settings > User Management and send invite emails. For invite emails to arrive, configure your SMTP credentials in .env before starting the containers. The relevant variables are EMAIL_SMTP_HOST, EMAIL_SMTP_PORT, EMAIL_SMTP_USER, and EMAIL_SMTP_PASSWORD.
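Before starting the containers, it can be worth confirming that all four variables are present and non-empty. The sketch below parses .env-style text with a deliberately simple parser (it ignores export prefixes and multi-line values). The function names are hypothetical.

```python
REQUIRED_SMTP_VARS = (
    "EMAIL_SMTP_HOST",
    "EMAIL_SMTP_PORT",
    "EMAIL_SMTP_USER",
    "EMAIL_SMTP_PASSWORD",
)


def parse_env(text: str) -> dict:
    """Parse KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_smtp_vars(env_text: str) -> list:
    """Return the required SMTP variables that are absent or empty."""
    values = parse_env(env_text)
    return [name for name in REQUIRED_SMTP_VARS if not values.get(name)]
```

Reading the file with open(".env").read() and passing the text to missing_smtp_vars returns an empty list when all four variables are set.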

Common Issues and Fixes

No models appear after connecting: This usually means Lightdash found the dbt project but dbt compilation failed without surfacing an error in the UI. Check the container logs with docker compose logs lightdash for dbt error output. Common causes are missing dbt profile credentials or a model with a compilation error.

Charts return no data: The query ran but returned zero rows. Check the date filter on the Explore page. Lightdash applies a default date filter to timestamp dimensions, and if the range does not match your data, results come back empty. Clear the filter or adjust the range.
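The empty-result case comes down to two date ranges that do not overlap. The sketch below spells out that check. The dates are made-up examples and ranges_overlap is a hypothetical helper.

```python
from datetime import date


def ranges_overlap(filter_start: date, filter_end: date,
                   data_start: date, data_end: date) -> bool:
    """Return True if the filter window shares at least one day with the data."""
    return filter_start <= data_end and data_start <= filter_end


if __name__ == "__main__":
    # Filter covers the last 30 days, but the sample data ends in 2025.
    print(ranges_overlap(date(2026, 3, 25), date(2026, 4, 24),
                         date(2025, 1, 1), date(2025, 12, 31)))  # False
```

A quick MIN and MAX query on the timestamp column in your warehouse gives the data range to compare against the filter.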

Slow chart load times: Lightdash pushes all computation to your data warehouse. A slow chart means the underlying SQL is expensive. Add clustering keys, partitioning, or materialized views to the dbt models powering those charts.

Invite emails not arriving: The most likely cause is that SMTP is not configured. Either set the SMTP variables in .env and restart the containers, or copy invite links directly from the User Management page and send them manually.

Practical Summary

A working Lightdash deployment requires four things: Docker Compose running all four containers; a dbt project connected via local path, dbt Cloud, or Git; warehouse credentials that pass the connection test; and at least one dbt model with column meta blocks defining dimensions and metrics. Most teams reach their first working dashboard within 45 minutes of starting the install.

After launch, the main ongoing work is expanding YAML field definitions as new models are added and granting access to business users. Lightdash does not require any configuration changes when you add new dbt models; run the sync command and new tables appear in the Explore panel automatically.

For teams that are not yet running dbt and need to start exploring data from uploaded files today, VSLZ AI handles that from a file upload with no configuration: upload a CSV or spreadsheet, describe what you want in plain English, and get charts, statistical summaries, and pivot tables in return.

FAQ

Does Lightdash work without dbt?

No. Lightdash requires a dbt project as its foundation. It reads your dbt model YAML files to generate the field catalog that powers the Explore interface. If you do not have a dbt project, you need to set one up before Lightdash will be useful. Lightdash provides a sample dbt project called Jaffle Shop that you can use for evaluation purposes.

Is Lightdash free to self-host?

Yes. The Lightdash community edition is free and open source (MIT license). It includes nearly the full feature set: all chart types, dashboards, user management, and warehouse connections. The paid Lightdash Cloud plan adds scheduled email and Slack deliveries, SSO, and managed hosting.

Which data warehouses does Lightdash support?

As of April 2026, Lightdash supports BigQuery, Snowflake, Redshift, PostgreSQL, DuckDB, Databricks, Trino, and ClickHouse. You select the warehouse type during project setup and provide the connection credentials. Lightdash tests the connection before saving.

How does Lightdash compare to Metabase for dbt users?

Metabase can connect to any database table without a dbt project and is easier to get running for teams with no existing dbt setup. Lightdash requires dbt but in return reads your metric definitions directly from dbt YAML, keeping metric logic in one place. Teams already running dbt in production typically find Lightdash produces fewer data trust issues because there is no second semantic layer to maintain separately.

Can Lightdash handle large datasets?

Lightdash pushes all computation to your data warehouse. It does not load data into its own storage. Performance depends entirely on your warehouse's query speed and the efficiency of your dbt model SQL. Lightdash adds no query overhead beyond generating the SQL and returning results to the browser.