How to Use ThoughtSpot Spotter

Last updated Mar 28, 2026

ThoughtSpot Spotter is a conversational AI analyst built into the ThoughtSpot platform. To use it, connect a cloud data warehouse, let a data engineer build worksheets over your tables, then open Spotter and type a plain-English question. Spotter translates your question to an analytical query, returns a chart or table, and lets you drill deeper through follow-up conversation. No SQL required. Most teams have their first answer within a day of setup.

What ThoughtSpot Spotter Actually Does

Traditional BI tools require you to build a dashboard before you can see an answer. ThoughtSpot inverts this. Spotter sits behind a search bar and waits for a question. When you type "show me revenue by region for last quarter," it translates the question into ThoughtSpot search tokens rather than raw SQL, resolves those tokens against a governed semantic layer, and returns a visualization in seconds.

The distinction matters. Text-to-SQL tools send your natural language to a language model and receive raw SQL back, which works until the model generates a subtly wrong join or ignores a business rule. ThoughtSpot's search-token approach keeps queries inside a schema that data engineers have already defined, so results stay consistent across users and over time.
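The following toy Python sketch makes the contrast concrete. It is not ThoughtSpot's algorithm. The `WORKSHEET_COLUMNS` schema and the `resolve_tokens` helper are hypothetical, and the sketch assumes the question has already been split into phrases. It shows the core constraint: every token must map to a column the data team defined, and anything unrecognized is reported back instead of guessed.

```python
# Toy illustration of token-based resolution; NOT ThoughtSpot's implementation.
# Hypothetical worksheet schema: column label -> column role.
WORKSHEET_COLUMNS = {
    "revenue": "measure",
    "region": "attribute",
    "product category": "attribute",
}


def resolve_tokens(phrases):
    """Map each phrase to a defined worksheet column.

    Returns (tokens, unmatched). Phrases with no matching column are
    reported back instead of being guessed, so the query can only touch
    columns the schema already defines.
    """
    tokens, unmatched = [], []
    for phrase in phrases:
        key = phrase.strip().lower()
        if key in WORKSHEET_COLUMNS:
            tokens.append((key, WORKSHEET_COLUMNS[key]))
        else:
            unmatched.append(phrase)
    return tokens, unmatched


tokens, unmatched = resolve_tokens(["Revenue", "Region", "gross margin"])
print(tokens)     # [('revenue', 'measure'), ('region', 'attribute')]
print(unmatched)  # ['gross margin']
```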

Spotter also maintains conversation context. After seeing revenue by region, you can ask "break this down by product category" or "what changed compared to last year?" Spotter treats these as follow-ups rather than new queries. This makes exploration feel closer to talking with an analyst than navigating a menu-heavy dashboard.

Step 1: Connect a Data Source

ThoughtSpot Cloud supports connections to over 20 data warehouses, including Snowflake, Google BigQuery, Amazon Redshift, and Databricks. The connection is read-only; ThoughtSpot never moves or stores your data.

To set up a connection:

- In the ThoughtSpot Cloud console, go to Data > Connections and click Create connection.

- Select your warehouse type. For Snowflake, you will need the account URL, warehouse name, database, schema, and a service account with SELECT privileges on the tables you plan to analyze.

- ThoughtSpot tests the connection on save. If it fails, the most common causes are network allow-list rules (add ThoughtSpot IP ranges to your warehouse firewall) or a service account with insufficient permissions.

- Once connected, select the tables you want to expose. Start with the three to five tables your team queries most often.
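In Snowflake, read-only access is usually granted through a role. The Python sketch below builds the standard Snowflake `GRANT` statements a warehouse admin might run for the service account. Treat it as an illustration under a few assumptions:

- The role, user, warehouse, database, and table names are placeholders.
- The role is assumed to already exist.
- Identifiers are not quoted or escaped.

Check the exact privilege set against your own security policy and ThoughtSpot's connector documentation.

```python
def snowflake_read_only_grants(role, user, warehouse, database, schema, tables):
    """Return Snowflake GRANT statements giving `role` SELECT on `tables`.

    The role also needs USAGE on the warehouse, database, and schema
    before SELECT on individual tables is useful. All names are placeholders.
    """
    fq_schema = f"{database}.{schema}"
    statements = [
        f"GRANT USAGE ON WAREHOUSE {warehouse} TO ROLE {role};",
        f"GRANT USAGE ON DATABASE {database} TO ROLE {role};",
        f"GRANT USAGE ON SCHEMA {fq_schema} TO ROLE {role};",
    ]
    # One SELECT grant per table you plan to expose (start with three to five).
    statements += [
        f"GRANT SELECT ON TABLE {fq_schema}.{table} TO ROLE {role};"
        for table in tables
    ]
    # Attach the role to the service account ThoughtSpot will log in as.
    statements.append(f"GRANT ROLE {role} TO USER {user};")
    return statements


for stmt in snowflake_read_only_grants(
    role="TS_READER", user="TS_SERVICE", warehouse="ANALYTICS_WH",
    database="SALES", schema="PUBLIC", tables=["TRANSACTIONS", "CUSTOMERS"],
):
    print(stmt)
```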

For teams using Databricks Unity Catalog, ThoughtSpot reads the catalog's built-in column descriptions and lineage, which reduces manual modeling work downstream.

Step 2: Build Worksheets Over Your Tables

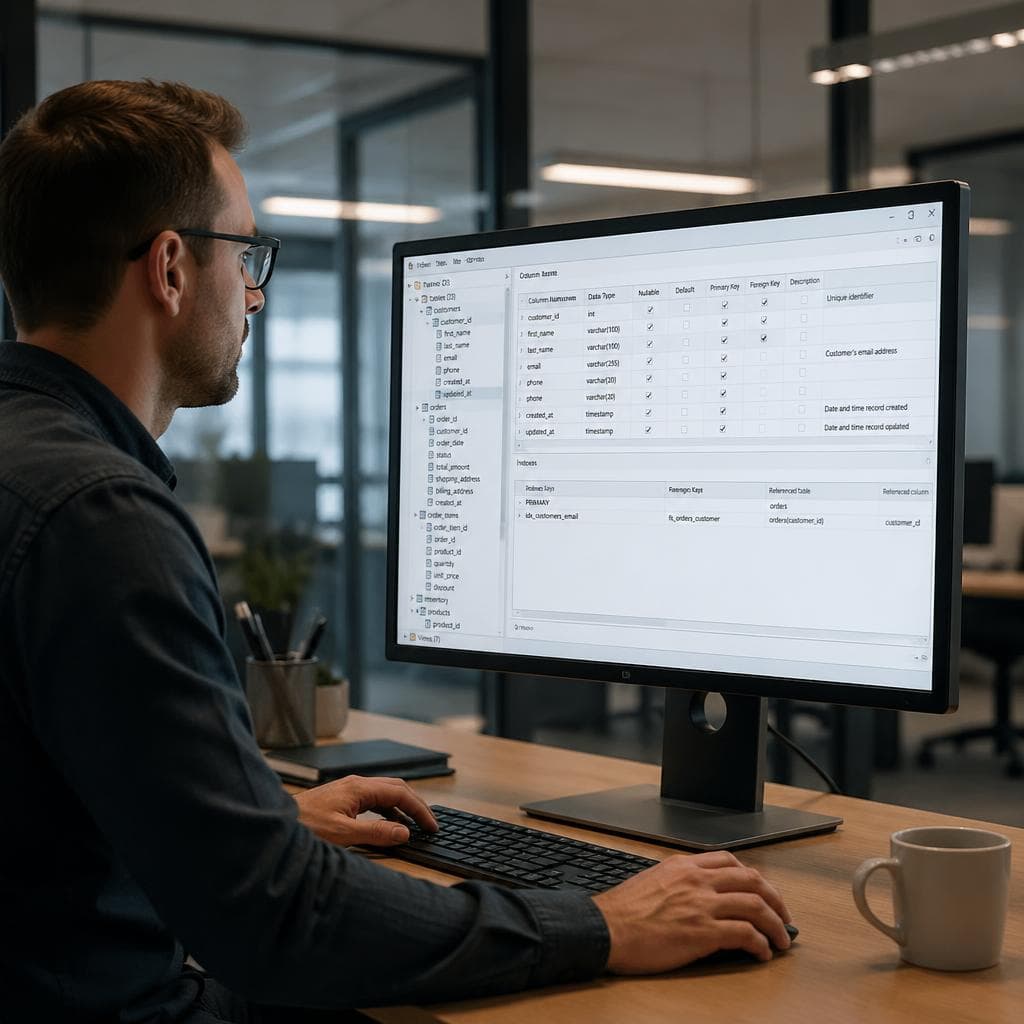

Raw tables rarely match how business users think about data. A transactions table might have a created_at field that business users call "sale date," or a user_id field that needs to join to a customers table.

Worksheets are ThoughtSpot's answer to this problem. A worksheet is a logical view over one or more tables that pre-defines joins, renames columns to business-friendly labels, and sets data types. Business users never see the underlying tables; they query the worksheet.
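Conceptually, a worksheet behaves like the small Python sketch below: a fixed join plus a rename map, applied before anyone queries the data. The table contents, column names, and `worksheet_view` helper are hypothetical. ThoughtSpot expresses this in the query it sends to the warehouse, not in Python. The sketch also includes the same-data-type check on join keys that Step 2 calls for.

```python
# Hypothetical raw tables, as rows of dicts.
transactions = [
    {"id": 1, "customer_id": 10, "created_at": "2026-01-05", "net_rev_usd": 120.0},
    {"id": 2, "customer_id": 11, "created_at": "2026-01-06", "net_rev_usd": 80.0},
    {"id": 3, "customer_id": 99, "created_at": "2026-01-07", "net_rev_usd": 50.0},
]
customers = [
    {"id": 10, "name": "Acme"},
    {"id": 11, "name": "Globex"},
]

# Raw column -> business-friendly label that users will type.
RENAMES = {"created_at": "Sale Date", "net_rev_usd": "Net Revenue", "name": "Customer"}


def worksheet_view(transactions, customers):
    """Inner-join transactions.customer_id = customers.id, then rename columns."""
    # Joined fields must share the same data type.
    left_types = {type(t["customer_id"]) for t in transactions}
    right_types = {type(c["id"]) for c in customers}
    if left_types != right_types:
        raise TypeError(f"join key types differ: {left_types} vs {right_types}")

    customers_by_id = {c["id"]: c for c in customers}
    rows = []
    for t in transactions:
        c = customers_by_id.get(t["customer_id"])
        if c is None:
            continue  # inner join: drop transactions with no matching customer
        raw = {"created_at": t["created_at"], "net_rev_usd": t["net_rev_usd"], "name": c["name"]}
        rows.append({RENAMES[col]: value for col, value in raw.items()})
    return rows


for row in worksheet_view(transactions, customers):
    print(row)
```

Business users only ever see the renamed output rows. The raw `created_at` and `net_rev_usd` names never reach them.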

To build a worksheet:

- Go to Data > Worksheets > Create worksheet.

- Add the tables you connected in Step 1.

- Define joins between tables. For example, set transactions.customer_id equal to customers.id. Joined fields must share the same data type.

- Rename columns to plain-language labels. Change created_at to Sale Date and net_rev_usd to Net Revenue. These names are what users will type into Spotter.

- Add formulas for calculated fields, such as Sum(revenue) divided by Count(orders) for average order value.

- Share the worksheet with the teams who will query it. ThoughtSpot supports row-level security, so you can restrict which rows each user group sees.
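The calculated-field and row-level security steps interact. Security filters apply to rows before any formula aggregates them, so two groups can see different average order values from the same worksheet. The Python sketch below models that interaction. The rows, the `RLS_RULES` mapping, and both helpers are hypothetical. The formula counts distinct order IDs rather than rows, an assumption made here so that multi-line orders are not double-counted.

```python
# Hypothetical worksheet rows (already joined and renamed).
ROWS = [
    {"Order ID": 1, "Region": "EMEA", "Net Revenue": 100.0},
    {"Order ID": 2, "Region": "EMEA", "Net Revenue": 250.0},
    {"Order ID": 2, "Region": "EMEA", "Net Revenue": 50.0},  # second line item of order 2
    {"Order ID": 3, "Region": "AMER", "Net Revenue": 50.0},
]

# Hypothetical row-level security: user group -> regions it may see.
RLS_RULES = {"emea_sales": {"EMEA"}, "global_finance": {"EMEA", "AMER"}}


def visible_rows(rows, group):
    """Apply row-level security; unknown groups see no rows."""
    allowed = RLS_RULES.get(group, set())
    return [r for r in rows if r["Region"] in allowed]


def average_order_value(rows):
    """sum(Net Revenue) / number of distinct orders; None if there are no orders."""
    orders = {r["Order ID"] for r in rows}
    if not orders:
        return None
    return sum(r["Net Revenue"] for r in rows) / len(orders)


print(average_order_value(visible_rows(ROWS, "emea_sales")))      # 200.0
print(average_order_value(visible_rows(ROWS, "global_finance")))  # 150.0
```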

A well-modeled worksheet is the most important factor in Spotter accuracy. If users get unexpected results, the first place to look is the worksheet column names and join definitions.

Step 3: Ask Your First Question

Once a worksheet is live, navigate to the Spotter tab. You will see a blank conversation panel and a text input.

Type a plain-English question. Good starting questions are specific and measurable: "What are the top 10 products by revenue this month?" or "Show me customer churn rate by acquisition channel for Q1 2026."

Each Spotter response includes three things:

- A chart or table answering your question

- The search tokens it used, so you can verify the logic

- Confidence indicators showing which parts of the question matched cleanly versus which required inference

If the answer looks wrong, review the tokens. Often the issue is a date range defaulting to all-time instead of a specific period, or an aggregation applying to the wrong dimension. You can edit the tokens directly or rephrase your question.
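The all-time default is easy to miss because both versions of the query return plausible numbers. This small Python sketch uses hypothetical order rows and a hypothetical `total_revenue` helper. It shows how omitting the date bounds silently widens the result.

```python
from datetime import date

# Hypothetical order rows spanning more than one year.
ORDERS = [
    {"date": date(2025, 3, 10), "revenue": 500.0},
    {"date": date(2026, 1, 15), "revenue": 200.0},
    {"date": date(2026, 2, 20), "revenue": 300.0},
]


def total_revenue(rows, start=None, end=None):
    """Sum revenue within [start, end]; a missing bound means no limit on that side."""
    return sum(
        r["revenue"]
        for r in rows
        if (start is None or r["date"] >= start) and (end is None or r["date"] <= end)
    )


print(total_revenue(ORDERS))                                       # 1000.0 (all-time)
print(total_revenue(ORDERS, date(2026, 1, 1), date(2026, 3, 31)))  # 500.0 (Q1 2026)
```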

According to ThoughtSpot documentation, Spotter's patented search model interprets questions in the context of your raw data rather than relying purely on a large language model. In practice, it scans actual column values to confirm entity names before running the query. Asking about "EMEA" should therefore resolve to the value as it is stored in your data, not to a definition the model invents.
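A simplified version of value matching checks the term against the distinct values actually stored in the column and returns the stored spelling, or nothing. The `match_entity` helper and sample values below are hypothetical and much cruder than Spotter's model. An exact, case-insensitive match like this would not connect "EMEA" to a value stored as "Europe, Middle East & Africa", which is why reviewing the tokens still matters.

```python
def match_entity(term, column_values):
    """Return the stored column value matching `term` (case-insensitive), else None."""
    wanted = term.strip().casefold()
    for value in column_values:
        if value.strip().casefold() == wanted:
            return value
    return None


regions = ["AMER", "EMEA", "APAC"]  # hypothetical distinct values of a Region column
print(match_entity("emea", regions))   # EMEA
print(match_entity("LATAM", regions))  # None
```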

Step 4: Deepen with Follow-Up Questions

Spotter maintains a running conversation state. After your first answer, you can continue the thread:

- "Filter to only enterprise customers"

- "What about the same period last year?"

- "Sort by growth rate instead"

- "Which sales rep has the highest conversion rate in this segment?"

Each follow-up refines or extends the previous query. This is where most users find the biggest productivity gain. Instead of building a new dashboard for each question, you iterate on a single conversation until you have the answer you need.

One practical note: Spotter resets context when you start a new conversation. If you close the chat and reopen it, you begin from scratch. Save important answers to a Liveboard before ending your session.
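One useful mental model is a query state that each follow-up modifies and a new conversation discards. The `Conversation` class below is a hypothetical Python sketch of that idea, not Spotter's internal representation. Its `snapshot()` method stands in for pinning an answer before the session ends.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Conversation:
    """Toy model of follow-up context; field names are hypothetical."""

    measure: Optional[str] = None
    group_by: list = field(default_factory=list)
    filters: dict = field(default_factory=dict)

    def ask(self, measure=None, group_by=None, filters=None):
        """Refine the running query state rather than starting over."""
        if measure is not None:
            self.measure = measure
        if group_by:
            self.group_by.extend(group_by)
        if filters:
            self.filters.update(filters)
        return self.snapshot()

    def snapshot(self):
        """Copy of the current state, e.g. to save before the chat closes."""
        return {"measure": self.measure, "group_by": list(self.group_by), "filters": dict(self.filters)}


chat = Conversation()
chat.ask(measure="Revenue", group_by=["Region"])
pinned = chat.ask(filters={"Customer Segment": "Enterprise"})
print(pinned)
# {'measure': 'Revenue', 'group_by': ['Region'], 'filters': {'Customer Segment': 'Enterprise'}}

chat = Conversation()  # a new conversation starts from an empty state
print(chat.snapshot())
```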

Step 5: Build a Liveboard with SpotterViz

A Liveboard is ThoughtSpot's version of a dashboard, but each visual on it is live rather than a static export. Any user with access can ask Spotter follow-up questions on a Liveboard chart directly from the board.

SpotterViz generates a complete Liveboard from a plain-language description of what you need. For example, you might type "Build me an executive sales dashboard with revenue by region, pipeline by stage, and top 10 accounts by ARR." SpotterViz identifies the right questions, queries the semantic layer, and assembles a structured board with narrative notes explaining the logic behind each chart.

To create a Liveboard manually from a Spotter answer:

- From any Spotter answer, click Pin to Liveboard.

- Create a new Liveboard or add to an existing one.

- Repeat for each chart you want to include.

- Add summary notes or text blocks to give the board context for readers.

- Set a refresh schedule: daily, hourly, or real-time for streaming sources.

Liveboards support filters you can apply across all visuals simultaneously. A single region dropdown filter cascades across every chart on the board, which is faster to build than filtering each chart individually.
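A cascading filter amounts to applying one filter to the shared rows and then evaluating every chart against the filtered set. The Python sketch below assumes a hypothetical board where each chart is a function of its input rows. The data, chart names, and `render_board` helper are illustrative only.

```python
# Hypothetical rows behind the board.
SALES = [
    {"Region": "EMEA", "Stage": "Closed", "Revenue": 100.0},
    {"Region": "EMEA", "Stage": "Open", "Revenue": 40.0},
    {"Region": "AMER", "Stage": "Closed", "Revenue": 70.0},
]


def revenue_total(rows):
    return sum(r["Revenue"] for r in rows)


def closed_deal_count(rows):
    return sum(1 for r in rows if r["Stage"] == "Closed")


# Each "chart" is just a function of the rows it receives.
CHARTS = {"Total revenue": revenue_total, "Closed deals": closed_deal_count}


def render_board(rows, region=None):
    """Apply one board-level filter once, then evaluate every chart on the result."""
    filtered = [r for r in rows if region is None or r["Region"] == region]
    return {name: chart(filtered) for name, chart in CHARTS.items()}


print(render_board(SALES))          # {'Total revenue': 210.0, 'Closed deals': 2}
print(render_board(SALES, "EMEA"))  # {'Total revenue': 140.0, 'Closed deals': 1}
```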

Step 6: Govern Metrics with Spotter Semantics

For teams with multiple analysts, metric definitions diverge quickly. One team calculates "monthly active users" by counting unique logins; another counts sessions. Spotter Semantics addresses this with a Governed Metrics Catalog.

Analysts define canonical metrics in the catalog: the formula, the description, and the approved use cases. When anyone asks Spotter a question involving that metric, Spotter uses the catalog definition rather than an ad hoc calculation. This prevents the situation where sales and finance report different revenue figures from the same data.
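The catalog idea can be sketched in a few lines of Python, assuming a hypothetical `METRIC_CATALOG` dictionary. Every request for a metric goes through one canonical definition, and a request for an undefined metric fails loudly instead of falling back to an ad hoc formula. The event data reproduces the logins-versus-sessions disagreement described above.

```python
# Hypothetical governed catalog: metric name -> description + canonical formula.
METRIC_CATALOG = {
    "monthly active users": {
        "description": "Distinct users with at least one login in the month.",
        "formula": lambda events: len({e["user_id"] for e in events if e["type"] == "login"}),
    },
}


def compute_metric(name, events):
    """Compute a metric using only its governed definition."""
    entry = METRIC_CATALOG.get(name.strip().lower())
    if entry is None:
        raise KeyError(f"no governed definition for {name!r}")
    return entry["formula"](events)


events = [  # hypothetical one-month event log
    {"user_id": 1, "type": "login"},
    {"user_id": 1, "type": "login"},
    {"user_id": 2, "type": "login"},
    {"user_id": 1, "type": "session"},
    {"user_id": 3, "type": "session"},
    {"user_id": 3, "type": "session"},
    {"user_id": 3, "type": "session"},
]

print(compute_metric("Monthly Active Users", events))  # 2

# An ad hoc "count sessions" definition reports a different number from the same data.
ad_hoc = sum(1 for e in events if e["type"] == "session")
print(ad_hoc)  # 4
```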

The Spotter Semantics layer also routes queries intelligently. Aggregate awareness sends summary-level questions to pre-aggregated tables rather than scanning raw detail rows, which reduces warehouse compute costs on high-volume datasets. ThoughtSpot reported that this routing can cut query times significantly on datasets with more than one billion rows.
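Aggregate awareness can be sketched as a coverage check. If a pre-aggregated table already contains every column the question groups by, the query is answered from that table. Otherwise it falls back to the detail table. The assumptions behind the sketch:

- The table names and `route_query` helper are hypothetical.
- The candidate list is ordered smallest-first.
- A real implementation must also confirm the measures can be rolled up; a sum can, but a distinct count generally cannot.

```python
# Hypothetical pre-aggregated tables, ordered smallest first,
# each with the set of columns it can be grouped by.
AGGREGATE_TABLES = [
    ("sales_by_month_region", {"month", "region"}),
    ("sales_by_day_region_product", {"day", "month", "region", "product"}),
]
DETAIL_TABLE = "sales_transactions"  # hypothetical raw, high-volume table


def route_query(group_by):
    """Return the first aggregate table covering every grouping column, else the detail table."""
    needed = set(group_by)
    for table, columns in AGGREGATE_TABLES:
        if needed <= columns:
            return table
    return DETAIL_TABLE


print(route_query(["region"]))                 # sales_by_month_region
print(route_query(["region", "product"]))      # sales_by_day_region_product
print(route_query(["region", "customer_id"]))  # sales_transactions
```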

For teams that model their data in dbt, Spotter Semantics reads dbt model documentation directly. If you have written descriptions for your dbt models, those descriptions carry over into ThoughtSpot's semantic layer with minimal reconfiguration. ThoughtSpot also aligns with Open Semantic Interchange standards, making it compatible with other semantic layers in Snowflake and Databricks environments.
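The dbt descriptions in question live in the `manifest.json` artifact that dbt writes to its `target/` directory. The Python sketch below shows where the model and column descriptions sit in that file. It is not how ThoughtSpot performs the import, and `extract_model_docs` is a hypothetical helper. The sample manifest at the bottom is a trimmed, hand-written example, not real dbt output.

```python
import json


def extract_model_docs(manifest):
    """Collect model and column descriptions from a parsed dbt manifest.json."""
    docs = {}
    for node in manifest.get("nodes", {}).values():
        if node.get("resource_type") != "model":
            continue  # skip tests, seeds, snapshots, etc.
        docs[node["name"]] = {
            "description": node.get("description", ""),
            "columns": {
                col_name: col.get("description", "")
                for col_name, col in node.get("columns", {}).items()
            },
        }
    return docs


def load_manifest(path="target/manifest.json"):
    """Read the manifest dbt writes to target/ (for example after `dbt compile`)."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Trimmed, hand-written sample; real manifests contain many more fields.
sample = {
    "nodes": {
        "model.shop.fct_orders": {
            "resource_type": "model",
            "name": "fct_orders",
            "description": "One row per completed order.",
            "columns": {"net_rev_usd": {"name": "net_rev_usd", "description": "Net revenue in USD."}},
        },
        "test.shop.not_null_fct_orders_id": {"resource_type": "test", "name": "not_null_fct_orders_id"},
    }
}
print(extract_model_docs(sample))
```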

What ThoughtSpot Does Not Replace

ThoughtSpot is optimized for question-and-answer analytics on structured data in a cloud warehouse. It is not a data preparation tool. Transformations should happen upstream in dbt, Spark, or SQL before reaching ThoughtSpot. The platform also requires a modeled worksheet before Spotter can answer questions reliably; raw tables without business-friendly naming tend to produce poor results until a data engineer completes the initial setup.

For teams without a data engineer available to configure connections and worksheets, a simpler path is to start with a tool built for file-based querying. If you have a CSV or Excel file and want answers without warehouse configuration, VSLZ AI handles that from a file upload using a single plain-language prompt, with no connection setup required.

Summary

Getting useful results from ThoughtSpot Spotter requires three setup steps: a warehouse connection, a modeled worksheet, and shared access for users. After setup, the workflow is fast. Ask a question in natural language, verify the search tokens, refine through conversation, and pin charts to a Liveboard. Spotter Semantics and the Governed Metrics Catalog extend this into multi-team environments where consistent metric definitions matter. For teams already in Snowflake, BigQuery, Redshift, or Databricks, the integration is clean and the time to first answer is typically one business day after a data engineer completes the worksheet configuration.

FAQ

Do I need to know SQL to use ThoughtSpot Spotter?

No. ThoughtSpot Spotter accepts plain-English questions and translates them into queries automatically using its patented search-token model. You do not need to write SQL to get answers, build charts, or create Liveboards. SQL knowledge is optional and mainly useful if a data engineer wants to write custom worksheet formulas during the setup phase.

What data sources does ThoughtSpot connect to?

ThoughtSpot Cloud supports over 20 data warehouse connections, including Snowflake, Google BigQuery, Amazon Redshift, Databricks, Azure Synapse, and Starburst. The connection is read-only; ThoughtSpot queries your warehouse directly without copying or storing your data.

What is a ThoughtSpot Liveboard?

A Liveboard is ThoughtSpot's equivalent of a dashboard, but each chart on it remains live and queryable. Users can ask Spotter follow-up questions directly from any chart on a Liveboard. SpotterViz can also generate a complete Liveboard automatically from a plain-language description of the metrics you want to track.

How is Spotter different from ChatGPT for data analysis?

General-purpose LLMs such as ChatGPT typically generate SQL or Python code from your question. Unless the tool is connected to your data, you then have to run that code separately. ThoughtSpot Spotter connects directly to your live data warehouse, runs the query in real time, and returns a chart or table in the same interface. It also shows the search tokens it used, so you can verify the logic. Spotter also uses a governed semantic layer so metric definitions stay consistent across all users, which general-purpose LLMs do not enforce.

What is Spotter Semantics and why does it matter?

Spotter Semantics is ThoughtSpot's AI-native semantic layer. It stores canonical definitions for your business metrics in a Governed Metrics Catalog, so every user gets the same answer when they ask about revenue, churn rate, or any other metric. It also routes queries to pre-aggregated tables when possible, reducing compute costs. For dbt users, Spotter Semantics reads existing dbt model documentation directly, reducing setup time.