How to Sync Your SaaS Data with Airbyte Cloud

Last updated Apr 23, 2026

Airbyte Cloud lets you pipe data from over 300 SaaS sources into a central database on a schedule, without Docker, servers, or Python. Most teams reach for it when manual CSV exports become a weekly chore or when they need last night's revenue data ready every morning. This guide covers the full setup using the managed cloud version, which removes the infrastructure overhead of the open-source self-hosted edition.

What Airbyte Cloud Does and When It Makes Sense

Airbyte Cloud acts as a managed pipeline: it pulls data from a source (HubSpot, Stripe, Google Ads, Salesforce, Postgres, and hundreds more) and loads it into a destination (BigQuery, Snowflake, Postgres, Redshift) on a schedule you set. You configure everything through a web interface.

The cloud version differs from the open-source Airbyte in one key way: you do not manage any infrastructure. Connector updates, authentication token refreshes, and compute capacity are handled by Airbyte. That makes it a good fit for small teams without a dedicated data engineer.

Use Airbyte Cloud when you need automated, recurring syncs. If you analyze data occasionally with one-off exports, the setup overhead is not worth it. If you want a reliable, self-updating pipeline that keeps your database current, it is.

Step 1: Create an Airbyte Cloud Account

Go to cloud.airbyte.com and sign up. The 30-day free trial requires no credit card. After email verification you land in a workspace, which is the container for all your sources, destinations, and connections. A source is where data originates; a destination is where it lands; a connection is the scheduled pipeline between them.

Step 2: Add a Source Connector

Click Sources in the left sidebar, then New source. Use the search box to find your tool. Airbyte Cloud ships with over 300 pre-built connectors, including every major CRM, ad platform, payment processor, and database.

For this guide, HubSpot is the example. Select it and fill in the configuration form:

- Source name — a label you choose, such as "HubSpot Production"

- Authentication — click Authenticate and log in with your HubSpot account. OAuth handles the token automatically.

- Start date — the earliest date to pull historical records from. Set this as far back as your plan allows if you want full history.

Click Set up source. Airbyte runs a connection test. On success you see a green checkmark and a list of available streams (tables). HubSpot exposes around 35 streams, including contacts, companies, deals, emails, line_items, and owners.

Step 3: Add a Destination

Click Destinations, then New destination.

BigQuery is the most common starting point for teams without an existing warehouse. Google provides $300 in free credits and the free tier covers substantial query volume. PostgreSQL is better if you already have a managed Postgres database on Supabase, Railway, or Render and want to minimize cost.

For a PostgreSQL destination, the fields are:

- Host, port, database name, username, password — from your database provider's dashboard

- Default schema — the schema where Airbyte writes its tables (e.g., airbyte)

- SSL mode — set to require if your provider enforces SSL, which most managed providers do
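Before entering these fields in Airbyte, it can help to confirm the same values work from another client. The sketch below assembles them into a standard libpq-style connection URI that tools such as psql accept. The host, database, and credentials are hypothetical placeholders, and the helper name postgres_uri is illustrative only.

```python
from urllib.parse import quote


def postgres_uri(host, port, database, username, password, sslmode="require"):
    """Build a libpq-style connection URI from the destination form fields."""
    # Percent-encode credentials so characters like '@' or '/' do not break the URI.
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"postgresql://{user}:{pwd}@{host}:{port}/{database}?sslmode={sslmode}"


# Hypothetical values for illustration only; use the ones from your provider's dashboard.
uri = postgres_uri("db.example.com", 5432, "analytics", "airbyte_user", "p@ss/word")
print(uri)
```

If a client can connect with this URI and create a table in the target schema, the same values should pass Airbyte's connection test.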

Click Set up destination. Airbyte tests the connection and confirms it can write.

Step 4: Create a Connection and Choose Streams

Click Connections, then New connection. Select your source and destination from the dropdowns.

The stream selection screen shows every available data stream from the source. You can sync all of them or pick a subset. For a HubSpot-to-Postgres pipeline focused on sales reporting, a reasonable starting selection is contacts, deals, companies, deal_stages, and owners.

For each stream, choose a sync mode:

- Full refresh | Overwrite — replaces the destination table entirely on every sync. Simple, but slow and expensive on large datasets.

- Incremental | Append — pulls only records created or updated since the last sync, appending new rows. Faster and cheaper for large tables.

- Incremental | Append + Dedup — like append, but deduplicates on a primary key so each destination table holds one row per record. Best for CRM objects that get updated frequently, such as contacts and deals.

For CRM data, use Incremental | Append + Dedup on all main objects. Use Full refresh | Overwrite only for small reference tables like deal_stages and owners, which change infrequently and have few rows.
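To make the difference between the three modes concrete, here is a minimal in-memory simulation. It is a conceptual sketch, not Airbyte's implementation: the rows, the integer updated_at cursor, and the function names are assumptions chosen for illustration.

```python
def full_refresh_overwrite(dest, source_rows):
    """Replace the destination table with the full source contents."""
    return list(source_rows)


def incremental_append(dest, source_rows, cursor):
    """Append every source row whose updated_at is newer than the cursor."""
    new_rows = [r for r in source_rows if r["updated_at"] > cursor]
    return dest + new_rows


def incremental_append_dedup(dest, source_rows, cursor, key="id"):
    """Append new rows, then keep only the latest version per primary key."""
    latest = {}
    for row in incremental_append(dest, source_rows, cursor):
        current = latest.get(row[key])
        if current is None or row["updated_at"] >= current["updated_at"]:
            latest[row[key]] = row
    return list(latest.values())


# Destination after a first sync that ended at cursor value 1.
dest = [{"id": 1, "stage": "lead", "updated_at": 1}]
# Source now: contact 1 was updated, contact 2 is new.
source = [
    {"id": 1, "stage": "customer", "updated_at": 2},
    {"id": 2, "stage": "lead", "updated_at": 2},
]

appended = incremental_append(dest, source, cursor=1)
deduped = incremental_append_dedup(dest, source, cursor=1)
overwritten = full_refresh_overwrite(dest, source)
print(len(appended), len(deduped), len(overwritten))
```

In this example, plain append leaves two versions of contact 1 in the table, while append with dedup keeps only the updated one. That is why dedup suits objects that change often.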

Step 5: Set the Sync Schedule

Below the stream selector, configure how often the pipeline runs. The free trial supports syncs as frequent as every 6 hours; paid plans go down to every hour.

For sales and revenue reporting, a daily sync at 6 AM local time covers most use cases. The first sync runs immediately after you click Set up connection.

For a HubSpot account with 5,000 contacts and 2,000 deals, the initial historical sync typically takes 4 to 10 minutes. Subsequent incremental syncs for the same account run in under 90 seconds.

Step 6: Verify the Output in Your Destination

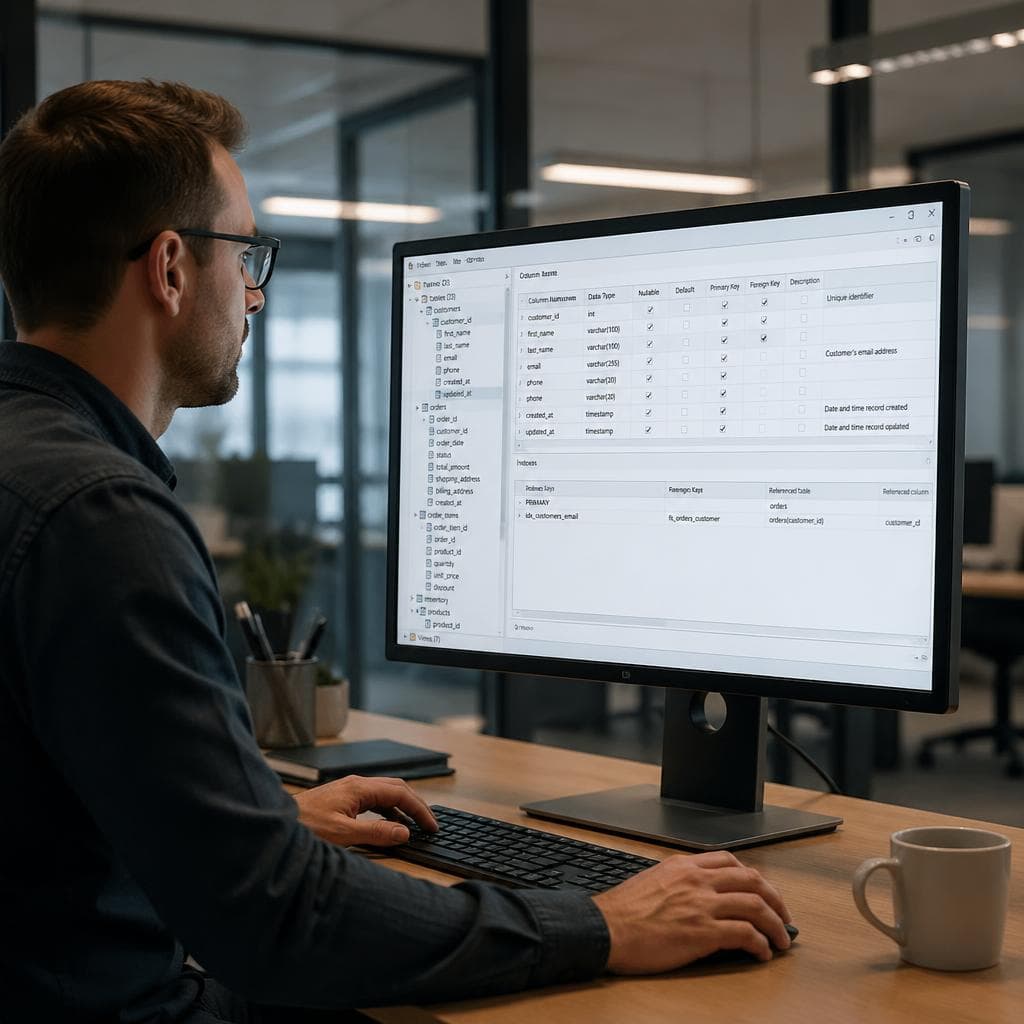

Once the first sync completes, connect to your destination database and check the tables. In Postgres, Airbyte writes each stream to a table in the schema you specified. Table names match the stream names exactly.

Run a quick verification:

SELECT COUNT(*) FROM airbyte.contacts;

SELECT MAX(_airbyte_extracted_at) FROM airbyte.contacts;

The _airbyte_extracted_at column is added by Airbyte and records when each row was extracted from the source. If the row count matches your HubSpot contact count and the timestamp is within the last hour, the pipeline is working correctly.
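The same check can be scripted once you have the results of the two queries. The function below is a sketch under stated assumptions: the name sync_looks_healthy, the one-hour default, and the sample numbers are illustrative, and the inputs are expected to come from your own database client.

```python
from datetime import datetime, timedelta, timezone


def sync_looks_healthy(dest_count, source_count, last_extracted_at, now,
                       max_age=timedelta(hours=1)):
    """Return True when counts match and the newest row is recent enough."""
    counts_match = dest_count == source_count
    fresh = (now - last_extracted_at) <= max_age
    return counts_match and fresh


# Hypothetical values standing in for the COUNT(*) and MAX(...) query results.
now = datetime(2026, 4, 23, 7, 0, tzinfo=timezone.utc)
ok = sync_looks_healthy(5000, 5000, now - timedelta(minutes=20), now)
stale = sync_looks_healthy(5000, 5000, now - timedelta(hours=30), now)
print(ok, stale)
```

For a daily schedule, a max_age slightly longer than the sync interval is likely a more useful threshold than one hour.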

Four Things Most Guides Skip

Most Airbyte tutorials end at the first successful sync. Four issues show up repeatedly in practice after that point:

Schema drift. When a SaaS vendor adds a new field to their API, Airbyte can automatically add a corresponding column to your destination table. This is convenient but can break downstream queries that reference specific columns. In each connection's settings, find the schema change handling option. Set it to "Pause sync" rather than "Auto-propagate" if your downstream queries are rigid.
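One way to catch drift before it breaks a dashboard is to compare the columns your queries rely on with the columns the table currently has. This is a minimal sketch: the column names are hypothetical, and the actual list is assumed to come from a query against information_schema.columns in your Postgres destination.

```python
def detect_schema_drift(expected_columns, actual_columns):
    """Compare the columns downstream queries rely on with what the table has now."""
    expected, actual = set(expected_columns), set(actual_columns)
    return {
        "added": sorted(actual - expected),    # new columns, usually harmless
        "missing": sorted(expected - actual),  # columns whose absence breaks queries
    }


# Hypothetical column lists for illustration.
expected = ["id", "email", "lifecyclestage"]
actual = ["id", "email", "lifecyclestage", "hs_new_score"]
drift = detect_schema_drift(expected, actual)
print(drift)
```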

Deleted records. Incremental connectors sync created and updated records. If a contact is deleted in HubSpot, the row stays in your Postgres table indefinitely. For churn or suppression analysis, you need to either run a weekly full refresh on the affected stream or join against HubSpot's deleted contacts endpoint separately.
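The reconciliation idea reduces to a set difference between the IDs in your destination and the IDs the source still reports. The sketch below assumes you have already fetched both ID lists. The values and the helper name find_deleted_ids are illustrative.

```python
def find_deleted_ids(destination_ids, source_ids):
    """Return IDs present in the destination but no longer in the source."""
    return sorted(set(destination_ids) - set(source_ids))


# Hypothetical IDs: 102 was deleted in the source, 105 has not been synced yet.
destination_ids = [101, 102, 103, 104]
source_ids = [101, 103, 104, 105]
stale_ids = find_deleted_ids(destination_ids, source_ids)
print(stale_ids)  # [102]
```

Rows with these IDs can then be flagged or removed in the destination, depending on whether your analysis needs a record of the deletion.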

Partial sync failures. Airbyte sends email alerts for complete sync failures, but not for partial failures where some streams succeed and others do not. Check the Logs tab for each connection after the first week. A stream stuck on "pending" or showing zero records is not always caught by the alert system.
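If you record per-stream outcomes after each sync, a small check can surface the streams worth a manual look. The dictionary shape below is a hypothetical structure for illustration, not the format of Airbyte's logs or API.

```python
def flag_suspect_streams(stream_results):
    """Return names of streams that did not succeed or synced zero records."""
    suspects = []
    for name, result in stream_results.items():
        if result["status"] != "succeeded" or result["records"] == 0:
            suspects.append(name)
    return sorted(suspects)


# Hypothetical per-stream outcomes from one sync run.
results = {
    "contacts": {"status": "succeeded", "records": 5000},
    "deals": {"status": "pending", "records": 0},
    "owners": {"status": "succeeded", "records": 0},
}
suspects = flag_suspect_streams(results)
print(suspects)
```

A zero-record incremental sync can be legitimate when nothing changed in the source, so treat the output as a list to review rather than a list of confirmed failures.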

Connection token expiry. OAuth tokens for some sources expire after 60 to 90 days and require re-authentication. Airbyte usually handles this automatically, but a small number of connectors require manual re-authorization. If a sync starts failing after roughly two months with an authentication error, re-run the OAuth flow in the source configuration.

From Pipeline to Analysis

Once your data lands in a central database, the next step is connecting an analytics tool to it. SQL-native tools like Metabase and Grafana connect directly to Postgres and let you build dashboards on top of the synced tables without replicating data again.

For teams where the person doing the analysis is not the same person who set up the pipeline, a plain-English query layer is useful. Tools like VSLZ AI connect to your Postgres destination and let non-technical teammates ask questions in natural language, turning the synced tables into answers without writing SQL.

The Airbyte setup takes under 30 minutes. The result is a pipeline that keeps your database current automatically, replacing the weekly ritual of exporting CSVs and merging spreadsheets.

Summary

Airbyte Cloud removes the server overhead of the open-source edition and works through a browser-only setup. The key decisions in any pipeline are: which streams to include, which sync mode to use (Incremental | Append + Dedup for most CRM objects), and how often to sync. After setup, monitor the Logs tab for partial failures, configure schema change handling to prevent silent column additions, and account for deleted records if that matters for your analysis. Once data is in your destination, any SQL-compatible BI or analytics tool can query it directly.

FAQ

Do I need to install anything to use Airbyte Cloud?

No. Airbyte Cloud is fully managed and runs in the browser. You do not need Docker, Python, or any local installation. You only need accounts with your data source (e.g., HubSpot) and your data destination (e.g., a PostgreSQL database or BigQuery project).

How is Airbyte Cloud different from the open-source Airbyte?

The open-source Airbyte requires you to run it yourself, typically using Docker Compose on a server or local machine. You manage infrastructure, updates, and uptime. Airbyte Cloud is a managed service that handles all of that automatically. The core connector library is the same, but the cloud version adds managed scheduling, automatic connector updates, and a built-in monitoring dashboard.

What destinations does Airbyte Cloud support?

Airbyte Cloud supports major data warehouses and databases as destinations, including BigQuery, Snowflake, Amazon Redshift, PostgreSQL, MySQL, and Azure Synapse. For teams without an existing warehouse, BigQuery with Google Cloud free credits is the lowest-cost starting point.

What is the difference between Full Refresh and Incremental sync?

Full Refresh replaces the entire destination table on every sync, pulling all records from the source each time. Incremental sync only pulls records created or updated since the last sync, making it much faster and cheaper on large datasets. For CRM data like contacts and deals that update frequently, Incremental | Append + Dedup is the recommended mode because it keeps only one row per record and does not re-process historical data on every run.

How do I handle deleted records in Airbyte?

By default, incremental connectors do not sync deletions. If a record is deleted in the source (e.g., a contact removed from HubSpot), the row remains in your destination table. To handle this, either switch the affected stream to Full Refresh mode so the table is rebuilt from scratch on each sync, or build a separate reconciliation query that identifies rows absent from the source. The right approach depends on how large the table is and how often records are deleted.